User:Manetta/graduation-proposals/proposal-1.0: Difference between revisions


Revision as of 00:22, 12 November 2015

graduation proposal +1.0

title: "i could have written that"

Introduction

For in those realms machines are made to behave in wondrous ways, often sufficient to dazzle even the most experienced observer. But once a particular program is unmasked, once its inner workings are explained in language sufficiently plain to induce understanding, its magic crumbles away; it stands revealed as a mere collection of procedures, each quite comprehensible. The observer says to himself "I could have written that". With that thought he moves the program in question from the shelf marked "intelligent" to that reserved for curios, fit to be discussed only with people less enlightened than he. (Joseph Weizenbaum, 1966)

abstract

'i-could-have-written-that' will be a publishing project around technologies that process natural language (NLP). The project will place tools and techniques, mainly computer software, at its centre. By regarding NLP software as cultural objects, i would like to look at the inner workings of their technologies: how do they systemize our natural language? This could create a space for alternative perspectives on software culture, as opposed to an attitude of accepting that software 'just works'.

This approach will hopefully lead to conversations that are not limited to technological views, but also reflect on cultural & political implications.

context

importance?

In current reactions to the effects of an optimistic belief in software, i recognize a gap:

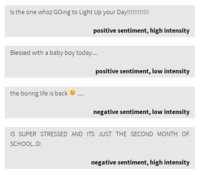

- on the one hand there is an objective attitude that regards data-mining results as information or even 'knowledge'; because of big-data's ideological aim to be in direct contact with information, there is a belief that 'truth' can be gained from data without any mediation: as it is the data that speaks[1] or no humans are ever involved[2].

- and on the other hand i recognize a habit of applying anthropomorphic qualities to software (like 'thinking' or 'machine learning'), which can easily obscure its syntactical nature and mislead.

This could be avoided by a collection of alternative perspectives on these techniques, made accessible and legible for people with an interest in regarding software as a cultural product. The choice to focus specifically on software that processes natural language is both a poetic choice and a way to speak directly with the techniques that create information by using, executing & processing language*. Then it would hopefully be possible to formulate an opinion or understanding about computational (syntactical) techniques without relying only on the main (and overpowering) perspective.**

- Critical thinking about computers is not possible without an informed understanding. (...) Software as a whole is not only 'code' but a symbolic form involving cultural practices of its employment and appropriation. (...) It's upon critics to reflect on the constraints that computer control languages write into culture. (from: Language, by Florian Cramer; published in Software Studies, edited by Matthew Fuller, 2008)

- in many cases these debates [about artificial intelligence] may be missing the real point of what it means to live and think with forms of synthetic intelligence very different from our own. (...) To regard A.I. as inhuman and machinic should enable a more reality-based understanding of ourselves, our situation, and a fuller and more complex understanding of what 'intelligence' is and is not. (from: Benjamin Bratton, Outing A.I.: Beyond the Turing Test, 2015)

- Raw-data is like nature, it is the idea that nature will speak by itself. It is the idea that thanks to big data, the world speaks by itself without any transcription, symbolization, institutional mediation, political mediation or legal mediation. (from: Antoinette Rouvroy; during her lecture as part of the panel 'All Watched Over By Algorithms', held during the Transmediale festival, 2015)

- The issue we are dealing now with in machine learning and big data is that a heuristic approach is being replaced by a certainty of mathematics. And underneath those logics of mathematics, we know there is no certainty, there is no causality. Correlation doesn't mean causality. — from: Colossal Data and Black Futures, lecture by Ramon Amaro; as part of the Impakt festival (2015)

publishing?

In the field of graphic design, i recognize a pattern of 'designing' on a high level. I call this 'high level design' because of the tradition of formulating design questions on the level of the interface and the interpretation of the reader. This is different in the field of computed type design, where fonts are built out of outlines, skeletons or dots, depending on their construction. The field of experimental book design could also be regarded as an exception. In the field of web design, working on the level of the interface is very common, but this could be avoided by regarding 'design' as designing a 'workflow'. That will lead to a design practice where questions regarding 'new media' and 'computation' can be considered design questions. Design questions are then related to the use of tools (software), social work structure, conservation, and principles regarding sharing and commercializing.**

During my bachelor in graphic design, i was educated in a quite traditional way: a focus on typography and visual language, combined with courses in editorial design. I became interested in semiotics, and in systems that use symbols/icons/indexes to construct meaning.

After my first year at the Piet Zwart, i feel that my interest is shifting from designing information on an interface level to designing information processes. Being fascinated by the inner workings of techniques and being affected by the 'free and open software' principles brings up a whole set of new design questions. For example:

- How can an interface reveal its inner system?

- And how could a workflow affect the information it is processing?

- What to do with being dependent on online services like WordPress, to publish?

- Should we reconsider workflows that can only happen online?

- How can documents not only be published but also conserved as readable documents? (as opposed to database entries that are stored in binary?)

- When could a document be called 'a document'? When it is readable for the user or for the computer?

Existing techniques that already give answers to these questions are Git and MediaWiki. I would like to include these questions in my graduation work, and work with these software packages.

→ (bit) more about this shift in looking at design is on this page

* A computer is a linguistic device. When using a computer, language is used (as interface to control computer systems), executed (as scripts written in (a set of) programming languages) and processed (turning natural language into data).

** derived from the following 'argument template': In the history of (...) i recognize a pattern of (...). This could be avoided by (...) because (...). Then it would be possible to (...).

publishing framework

framework

for who?

- people with an interest to regard software as a cultural product

with who?

my position?

how often?

#0 issue

- WordNet case-study

- ontologies / taxonomies / vocabularies like RDF, OWL, OpenCyc; (aiming at a semantic web & linked data)

- historical categorization work (Leibniz's statistics, Roget's thesaurus)

For the #0 issue i would like to look at 'WordNet'. WordNet is a lexical dataset and a primary resource in the field of Knowledge Discovery in Data processes (also known as the field of data-mining and big-data). WordNet is built from word-'synsets' (where a word can have multiple entries according to its multiple meanings), which are related to each other by various relations, like word-type, category or synonyms. This dataset has been developed since 1985, and is basically a norm in the field, used during the training processes of data-mining algorithms. Although the focus on word-synsets is an attempt to create a nuanced model of a human language, the dataset is still a model, and will always be 'imperfect'.
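To make the synset idea concrete, here is a minimal sketch of such a structure in Python. It is a toy model and not WordNet itself: the class `Synset`, the identifiers like `bank.n.01`, the glosses and the four entries are all made up for illustration, and mapping 'category' onto a `hypernyms` link is an assumption about how the relations above could be represented.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Synset:
    """One meaning: the words that share it, plus links to other synsets."""
    name: str            # made-up identifier, e.g. "bank.n.01"
    pos: str             # word-type: "n" for noun, "v" for verb, ...
    lemmas: List[str]    # words that express this meaning (synonyms)
    gloss: str           # short definition
    hypernyms: List[str] = field(default_factory=list)  # more general synsets ("category")


# A tiny hand-written sample; this is NOT real WordNet data.
SYNSETS = {
    s.name: s
    for s in [
        Synset("institution.n.01", "n", ["institution"], "an organization"),
        Synset("slope.n.01", "n", ["slope", "incline"], "an elevated piece of ground"),
        Synset("bank.n.01", "n", ["bank", "depository"], "a financial institution",
               ["institution.n.01"]),
        Synset("bank.n.02", "n", ["bank"], "sloping land beside water",
               ["slope.n.01"]),
    ]
}


def lookup(word: str) -> List[Synset]:
    """Return every synset (meaning) in which the word appears."""
    return [s for s in SYNSETS.values() if word in s.lemmas]


def synonyms(word: str) -> Set[str]:
    """Collect the other words that share at least one synset with the word."""
    return {lemma for s in lookup(word) for lemma in s.lemmas if lemma != word}


for synset in lookup("bank"):
    print(synset.name, "->", synset.gloss, "| category:", synset.hypernyms)
```

Even in this small sample, someone had to decide that 'bank' has exactly two meanings and which more general category each one belongs to. Those decisions are the kind of modelling choices the #0 issue wants to look at.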

Today, as written language is regarded as 'data', data-mining techniques 'read' written text to return certain 'information', also called 'knowledge'. It is a constructed truth instead, and datasets such as WordNet form the basis of such truths.
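What 'reading' can mean on the most basic level is sketched below: a text is cut into word tokens and counted. This is only an illustration of one common first step in text-mining, not the method of any specific tool; the function name `read_as_data` and the example sentence are invented for this sketch.

```python
import re
from collections import Counter


def read_as_data(text: str) -> Counter:
    """'Read' a text by splitting it into lowercase word tokens and counting them."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(tokens)


# Invented example sentence, only for illustration.
sentence = "The data speaks, but the data does not speak for itself."
counts = read_as_data(sentence)

for word, count in counts.items():
    print(word, count)
```

After this step the word order and the sentence itself are gone, and 'speaks' and 'speak' are counted as two unrelated words unless a further model (a lexicon, a stemmer, a dataset like WordNet) decides that they belong together. Each of these steps is a moment of mediation.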

→ other elements i have been working on are documented on this page

planning for practical steps

- selecting a WordNet case study (to speak about a specific context)

- set the specific topic of #0; (approach towards WordNet)

- collecting related material

- ask around / contact specific researchers or writers that are working in the field

- prototype publishing formats (related to the structure of WordNet)

- mapping WordNet's structure

- using WordNet as a writing filter?

- WordNet as structure for a collection (similar to the way i've used the SUN database)

- start a collective platform (wiki?) to document the publications & related material

Thesis intention

I would like to integrate my thesis into my graduation project, so that my research becomes my thesis as well as the content of the publication(s). This could take multiple forms, for example:

- interview with creators of datasets or lexicons like WordNet

- close reading of a piece of software, like we did during the workshop at Relearn. Options could be: text-mining software Pattern (Relearn), or Weka 3.0; or WordNet, ConceptNet, OpenCyc

Relation to previous practice

In the last year, i've been looking at different tools that process natural language. From speech-to-text software to text-mining tools, they all systemize language in various ways.

As a continuation of that i took part in the Relearn summerschool in Brussels last August (2015), to propose a work track in collaboration with Femke Snelting on the field of text-mining. With a group of people we have been trying to deconstruct the 'truth-construction' in a text-mining software package called 'Pattern'. We deconstructed the mathematical models that are used, finding moments where semantics are mixed with mathematics, and trying to grasp what kind of cultural context is created around this field. The workshop during Relearn transformed into a project that we called '#!PATTERN+', which will be a critical fork of the latest version of Pattern, including reflections and notes on the software and the culture that surrounds it. The README file that has been written for #!PATTERN+ is online here, and more information is collected on this wiki page.

Another entrance to understanding what happens in algorithmic practices such as machine learning is to look at the training sets that are used to train algorithms to recognize certain patterns in a set of data. These training sets could contain a large set of images, texts, 3d models, or videos. By looking at such datasets, and more specifically at the choices that have been made in terms of structure and hierarchy, steps in the construction of a certain 'truth' are revealed. For the exhibition "Encyclopedia of Media Object" at V2 last June, i created a catalog, voice over and booklet, which placed the objects from the exhibition within the framework of the SUN database, a resource of images for image recognition purposes. (link to the "i-will-tell-you-everything (my truth is a constructed truth)" interface)

There are a few datasets in the academic world that seem to be basic resources to build these training sets upon. In the field they are called 'knowledge bases'. They live on a more abstract level than the training sets do, as they try to create a 'knowledge system' that could function as a universal structure. Examples are WordNet (a lexical dataset), ConceptNet, and OpenCyc (an ontology dataset). In the last months i've been looking into WordNet, worked on a WordNet Tour (still ongoing), and made an alternative browser interface (with cgi) for WordNet. It's all a process that has not yet been transformed into an object/product, but until now it is documented here and here on the Piet Zwart wiki.
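The code of the browser interface itself is not part of this proposal. As a rough idea of how a cgi script can answer a lookup, here is a minimal sketch under assumptions: the query parameter `word`, the function `render_page` and the one-entry dictionary `TOY_ENTRIES` are hypothetical stand-ins, and no real WordNet data is used.

```python
#!/usr/bin/env python3
import html
import os
from urllib.parse import parse_qs

# Hypothetical stand-in for a WordNet lookup; this is NOT real WordNet data.
TOY_ENTRIES = {
    "bank": ["a financial institution", "sloping land beside water"],
}


def render_page(query_string: str) -> str:
    """Build the HTML answer for a query string such as 'word=bank'."""
    word = parse_qs(query_string).get("word", [""])[0].strip().lower()
    senses = TOY_ENTRIES.get(word, [])
    # Escape everything that ends up in the page, including the user's query.
    items = "".join(f"<li>{html.escape(sense)}</li>" for sense in senses)
    body = f"<ol>{items}</ol>" if senses else "<p>no entry found</p>"
    return f"<h1>{html.escape(word)}</h1>{body}"


if __name__ == "__main__":
    # A CGI script gets the query from the environment and answers on stdout:
    # first the headers, then an empty line, then the document.
    print("Content-Type: text/html; charset=utf-8")
    print()
    print(render_page(os.environ.get("QUERY_STRING", "")))
```

Placed in a web server's cgi directory and requested with `?word=bank`, a script like this returns a plain numbered list of meanings. Every choice about what to show and in which order is made in `render_page`, which is what makes the interface itself readable as a design decision.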

- [1] Using twitter to predict heart disease, Lyle Ungar at TEDxPenn; https://www.youtube.com/watch?v=FjibavNwOUI (June 2015)

- [2] Introducing the automatic Flickr tagging bot, on the Flickr Forums; https://www.flickr.com/help/forum/en-us/72157652019487118/ (May 2015)