User:Francg/expub/specialissue2/dev2: Difference between revisions


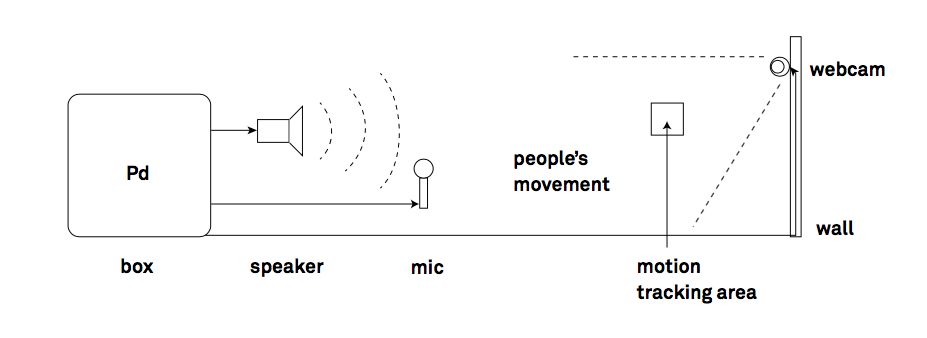


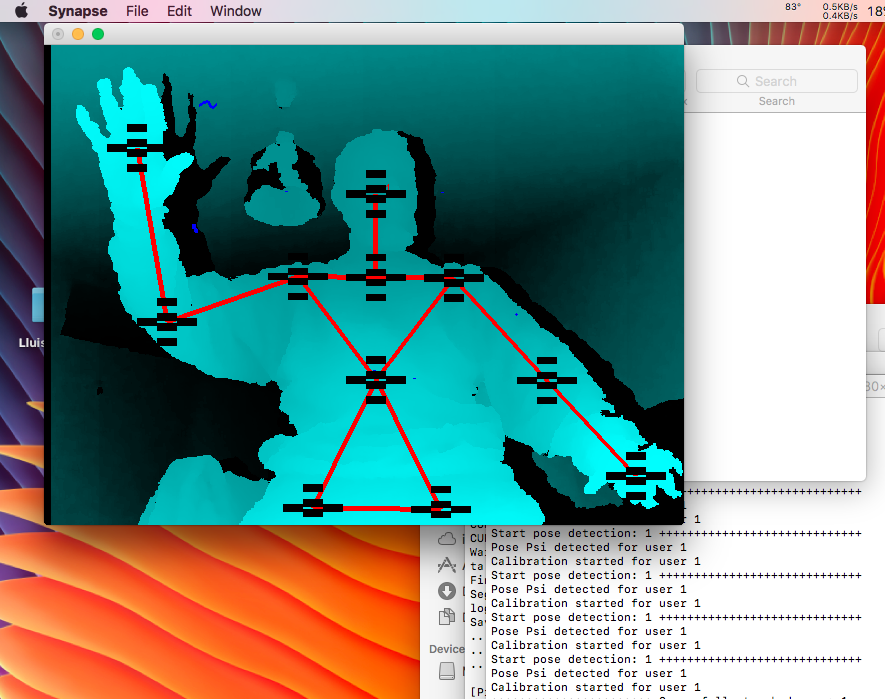

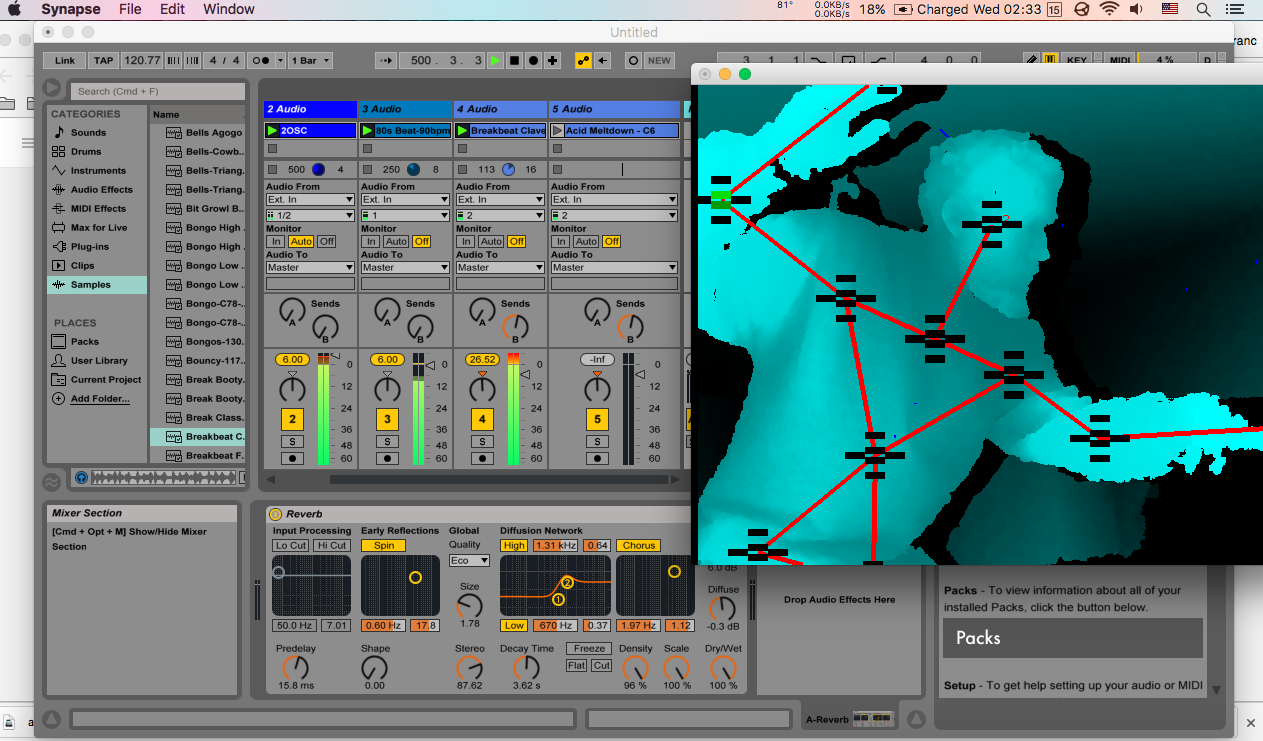


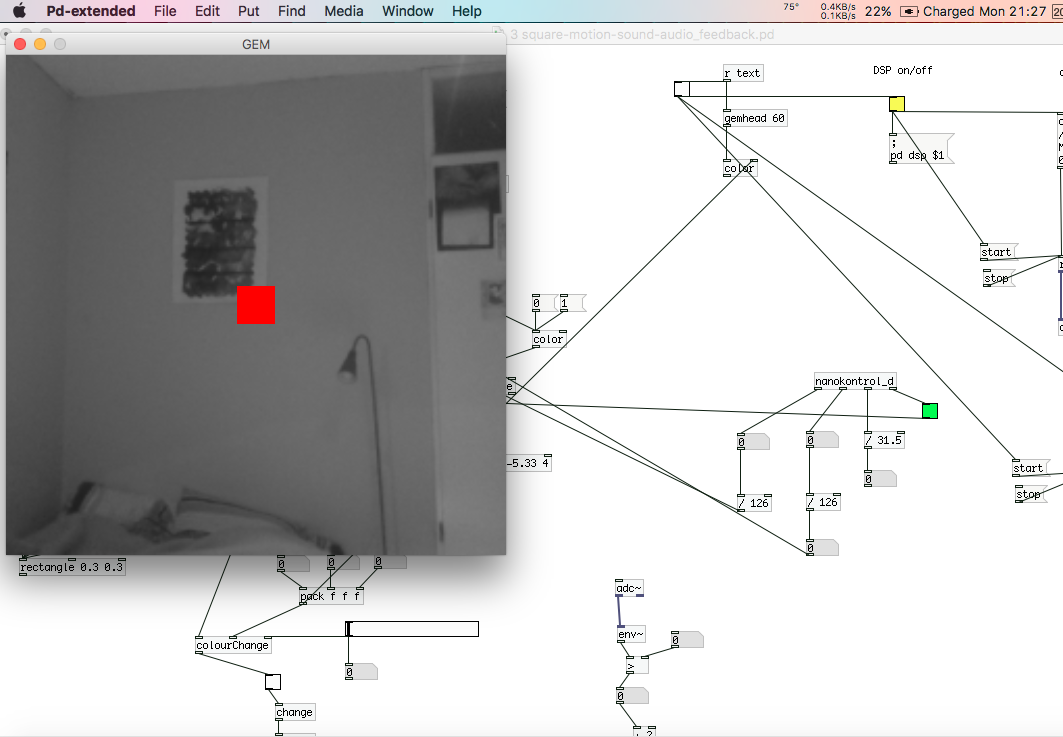


Revision as of 21:13, 1 March 2017

Synapse + Kinect

Synapse + Kinect + Ableton + patches to merge and synchronize Ableton's audio samples with the movement of the body's limbs

* * Meeting notes / Feedback * *

- How can a body be represented in a score?

- "Biovision Hierarchy" (BVH) = a file format for motion capture data.

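- Femke Snelting reads the Biovision Hierarchy Standard: http://thursdaynight.hetnieuweinstituut.nl/en/activities/femke-snelting-reads-biovision-hierarchy-standard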

- Systems of notations and choreography - Johanna's thesis in the wiki
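As a rough illustration of how a BVH file could already be read as a kind of score, here is a minimal Python sketch. It only handles the basic keywords of the format (`HIERARCHY`, `ROOT`, `JOINT`, `CHANNELS`, `MOTION`); the sample skeleton and the function name `parse_bvh` are made up for this example and do not come from the meeting.

```python
# Hypothetical, heavily simplified BVH sample: two joints and one frame of motion.
SAMPLE_BVH = """HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Chest
  {
    OFFSET 0.0 5.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 3.0 0.0
    }
  }
}
MOTION
Frames: 1
Frame Time: 0.033333
0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0
"""


def parse_bvh(text):
    """Return (joints, frames): the skeleton as a list and the motion as rows of numbers."""
    joints, frames, depth = [], [], 0
    in_motion = False
    for raw in text.splitlines():
        tokens = raw.split()
        if not tokens:
            continue
        head = tokens[0]
        if in_motion:
            # Skip the "Frames:" and "Frame Time:" header lines, keep the numeric rows.
            if head not in ("Frames:", "Frame"):
                frames.append([float(t) for t in tokens])
        elif head == "MOTION":
            in_motion = True
        elif head in ("ROOT", "JOINT"):
            joints.append({"name": tokens[1], "depth": depth, "channels": []})
        elif head == "CHANNELS" and joints:
            # tokens[1] is the channel count, the rest are the channel names.
            joints[-1]["channels"] = tokens[2:]
        elif head == "{":
            depth += 1
        elif head == "}":
            depth -= 1
    return joints, frames


joints, frames = parse_bvh(SAMPLE_BVH)
for joint in joints:
    print("  " * joint["depth"] + joint["name"], joint["channels"])
```

Each row in `frames` is one moment in time and each column is one channel of one joint, which is close to how a score assigns a line to each instrument.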

Raspberry Pi *

- Floppy disk: contains a patch from Pd.

- Box: Floppy Drive, camera, mic...

- Server: Documentation such as images, video, prototypes, resources...

- There are two different research paths that could be more interesting to explore separately:

1 - on the one hand, * motion capture * using tools/software like "Kinect", the "Synapse" app, "Max MSP", "Ableton", etc.

2 - on the other hand, data / information reading.

* This can be further developed and simplified.

* However, motion capture using Pd and an ordinary webcam to make audio effects could be efficiently linked.

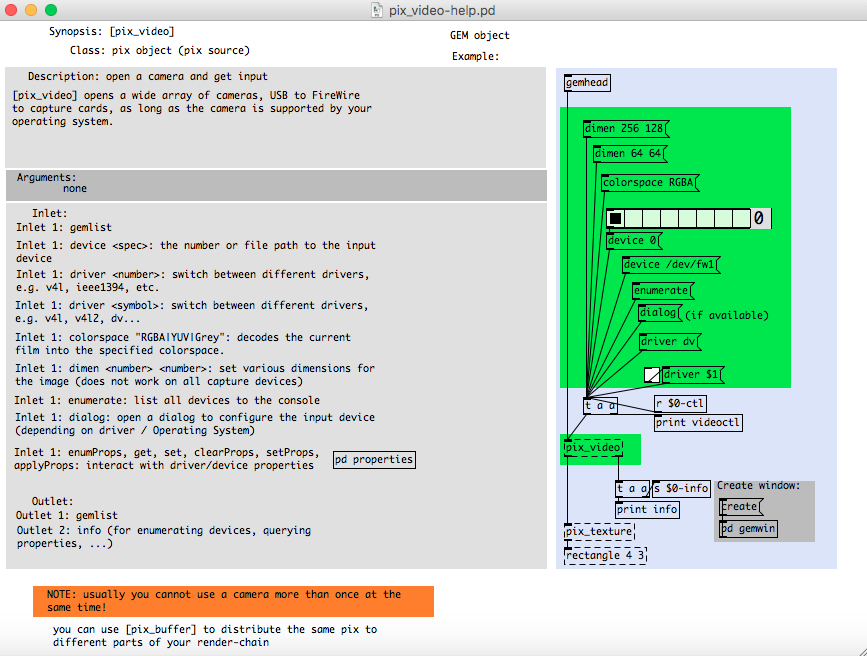

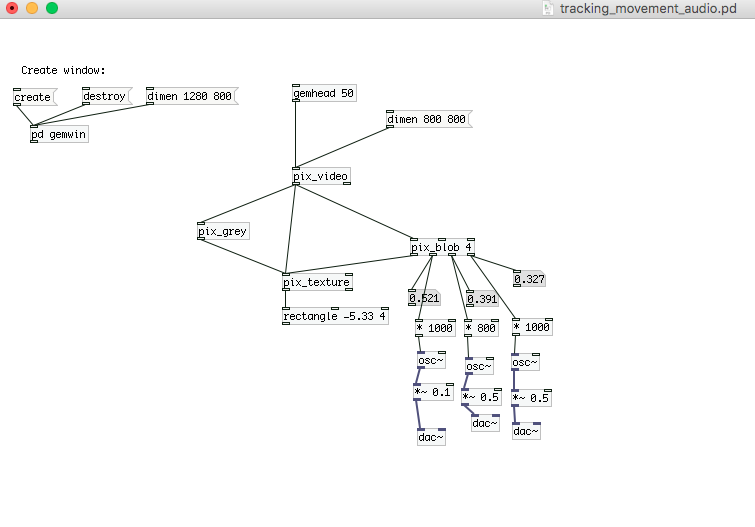

Detecting video input from my laptop's webcam in Pd

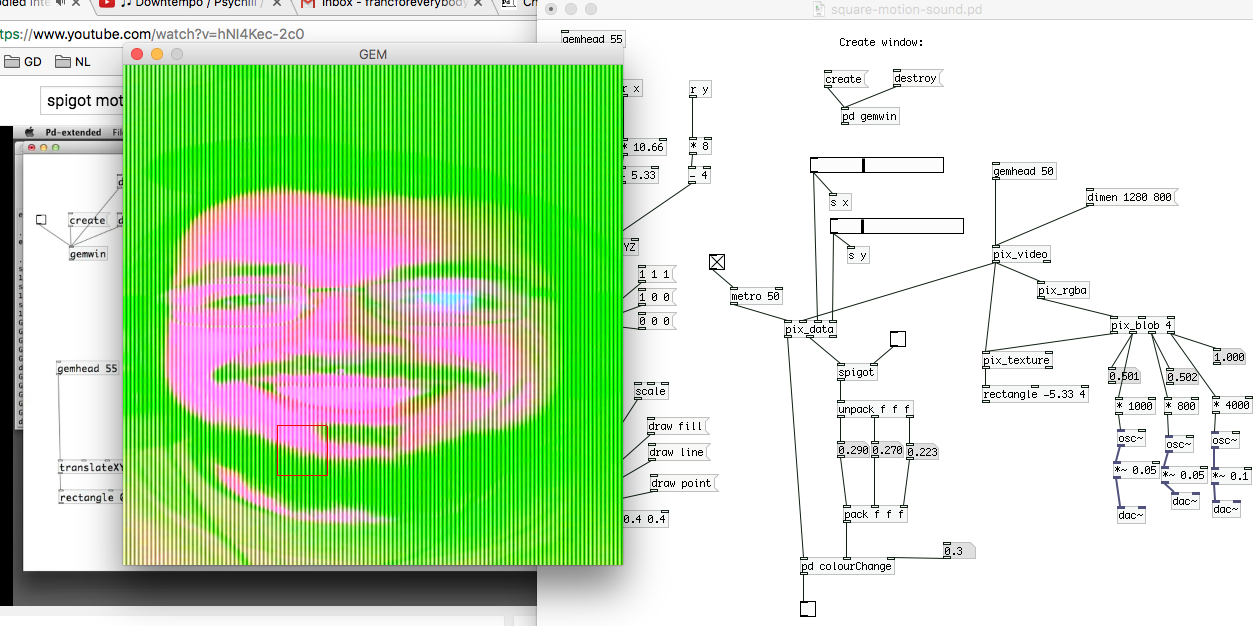

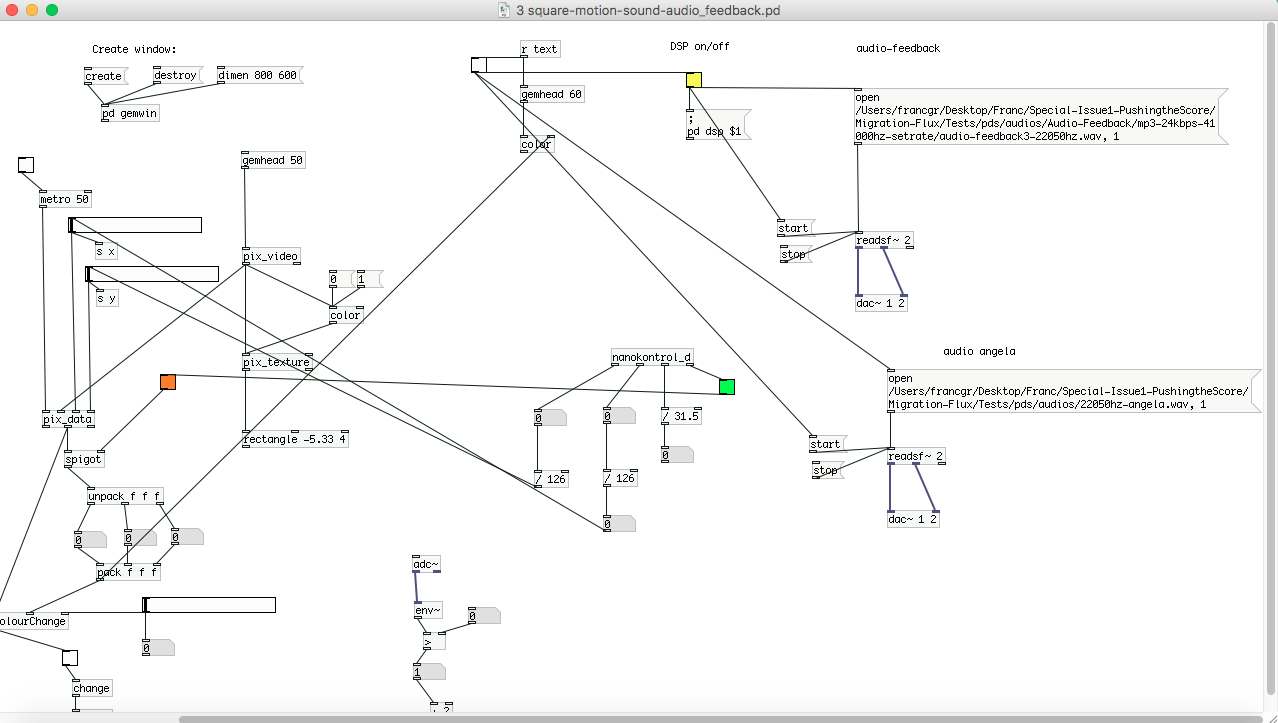

Motion Detection - “blob” object and oscillators
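The idea behind this patch can be sketched outside Pd as well. The Python below is only an analogy, not how the “blob” object works internally: it assumes greyscale frames stored as lists of rows, finds the centre of the pixels that changed between two frames, and maps the horizontal position to an oscillator frequency. The function names, the threshold and the 110 to 880 Hz range are assumptions chosen for the example.

```python
def motion_centroid(prev, curr, threshold=30):
    """Return (x, y, changed_pixels) for the pixels that differ between two frames, or None."""
    total = sum_x = sum_y = 0
    for y, (row_a, row_b) in enumerate(zip(prev, curr)):
        for x, (a, b) in enumerate(zip(row_a, row_b)):
            if abs(a - b) > threshold:
                total += 1
                sum_x += x
                sum_y += y
    if total == 0:
        return None  # nothing moved
    return sum_x / total, sum_y / total, total


def position_to_frequency(x, width, low=110.0, high=880.0):
    """Map a horizontal pixel position to a frequency in Hz (linear mapping)."""
    return low + (high - low) * (x / (width - 1))


# Two tiny 4x4 test frames: one pixel lights up in the second frame.
previous_frame = [[0] * 4 for _ in range(4)]
current_frame = [[0] * 4 for _ in range(4)]
current_frame[1][3] = 255

result = motion_centroid(previous_frame, current_frame)
if result is not None:
    x, y, size = result
    print(position_to_frequency(x, width=4))
```

In a patch, the resulting number would be sent to an oscillator, so that moving across the camera image sweeps the pitch.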

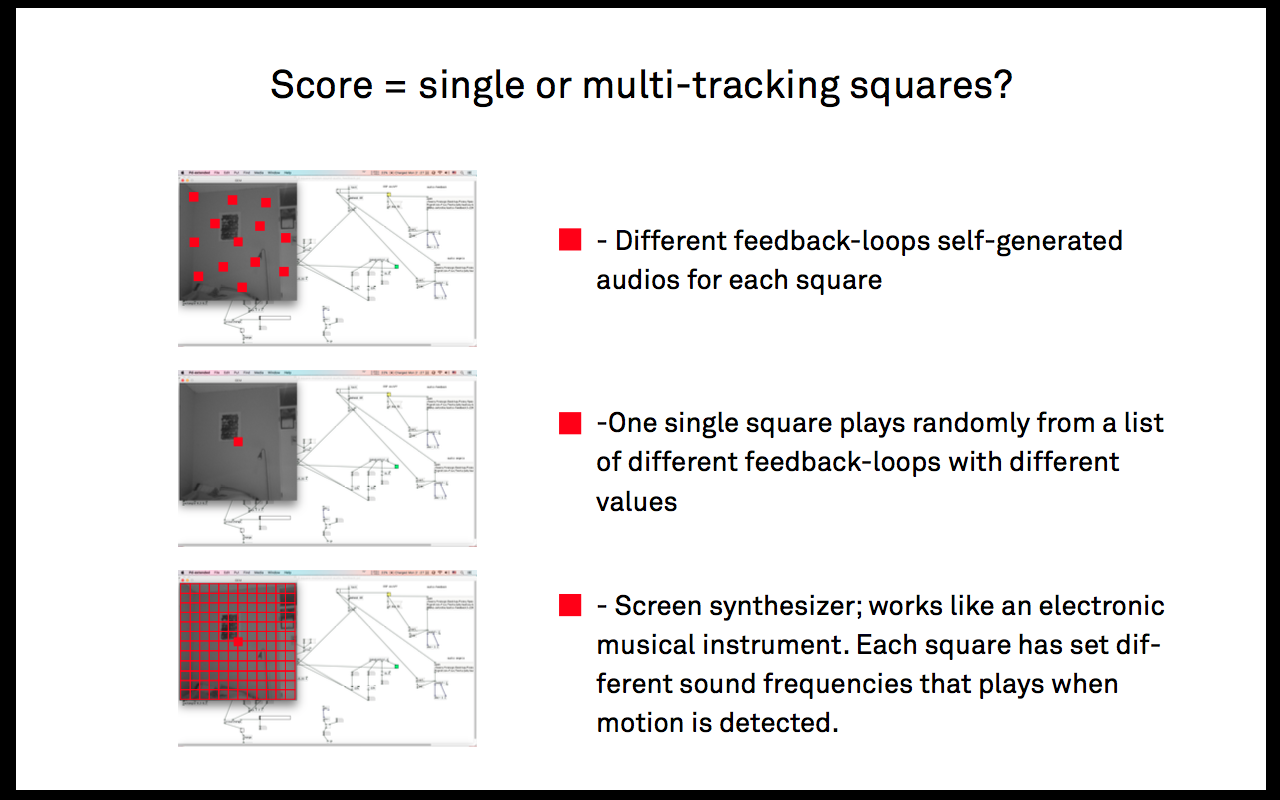

Color tracking inside a square with the “spigot” + “blob” objects. This can be achieved in rgba or grey, by combining "grey" and "rgba" simultaneously, or with any other screen noise effect.

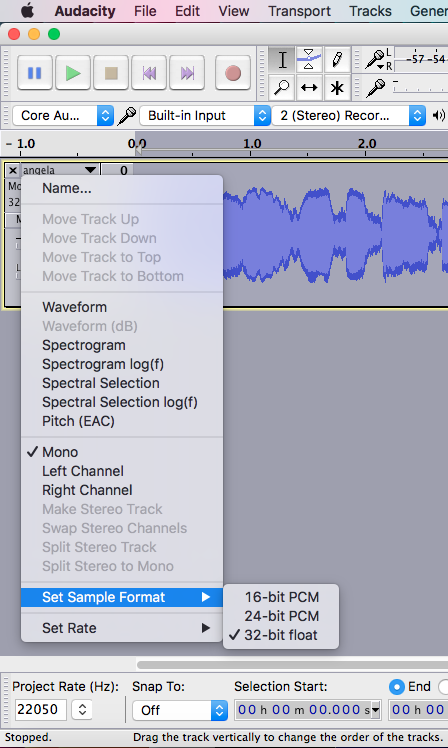

The same process can be performed with self-generated, imported audio files. It's important to ensure that their sample rate matches the one in Pd's media/audio settings, in order to avoid errors.
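One way to check a file before importing it is to read the sample rate from its header. This is a small sketch using Python's standard `wave` module, so it only covers uncompressed WAV files; the 44100 Hz value is just an assumed example and should be replaced with whatever Pd's audio settings show. The helper `write_silent_wav` exists only to produce a test file.

```python
import os
import tempfile
import wave


def wav_sample_rate(path):
    """Read the sample rate (in Hz) from a WAV file header."""
    with wave.open(path, "rb") as wav:
        return wav.getframerate()


def sample_rate_matches(path, expected_rate=44100):
    """True if the file's sample rate equals the rate set in Pd (assumed value)."""
    return wav_sample_rate(path) == expected_rate


def write_silent_wav(path, rate, seconds=0.1):
    """Write a short mono 16-bit silent WAV file, used here only for the demo."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(rate * seconds))


if __name__ == "__main__":
    demo_path = os.path.join(tempfile.mkdtemp(), "demo.wav")
    write_silent_wav(demo_path, 48000)
    if not sample_rate_matches(demo_path, 44100):
        print("Resample this file first:", wav_sample_rate(demo_path), "Hz")
```

If the rates differ, the file can be resampled (for example in Audacity) before it is loaded into the patch.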

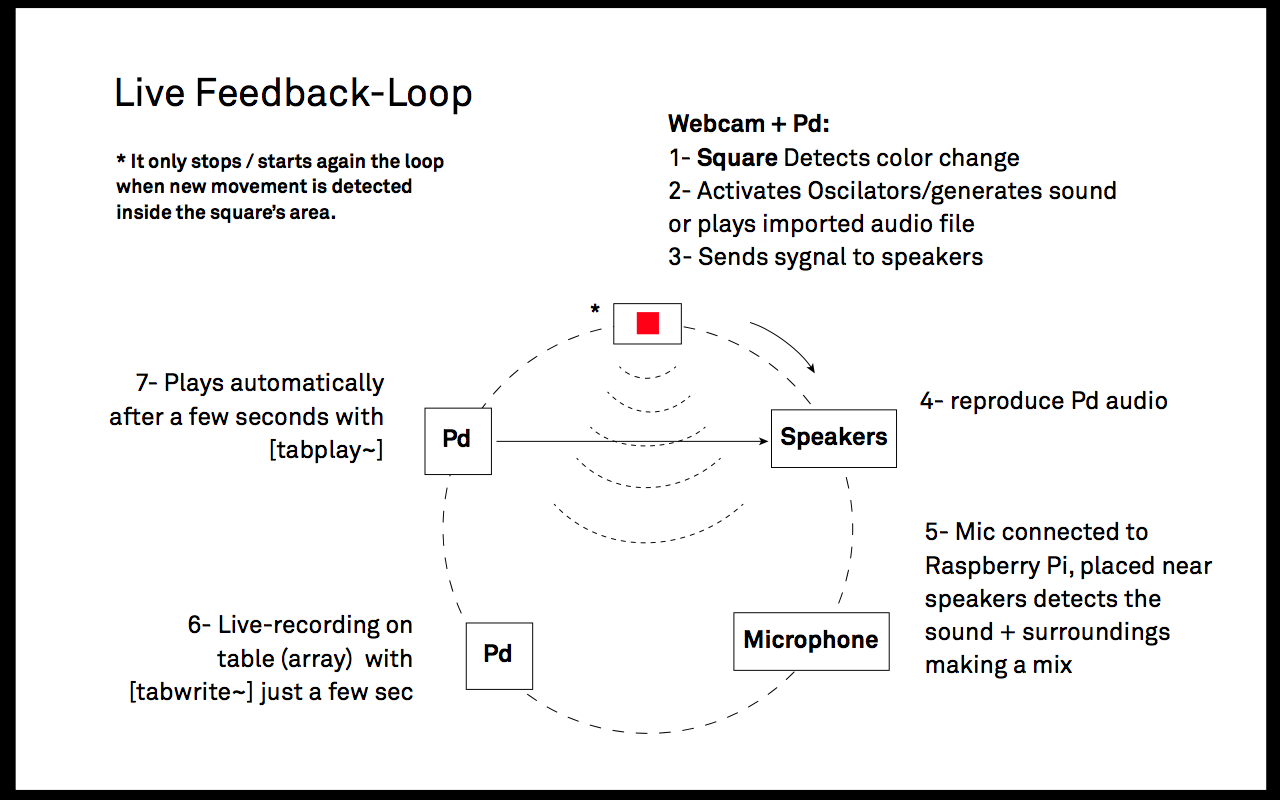

In order to better understand how audio feedback works, I generated a series of feedback loops by "screen recording" my prototypes, using QuickTime and the microphone input(s), along with specific system audio settings. They were later edited in Audacity.

File:Audio1.ogg

File:Audio2.ogg

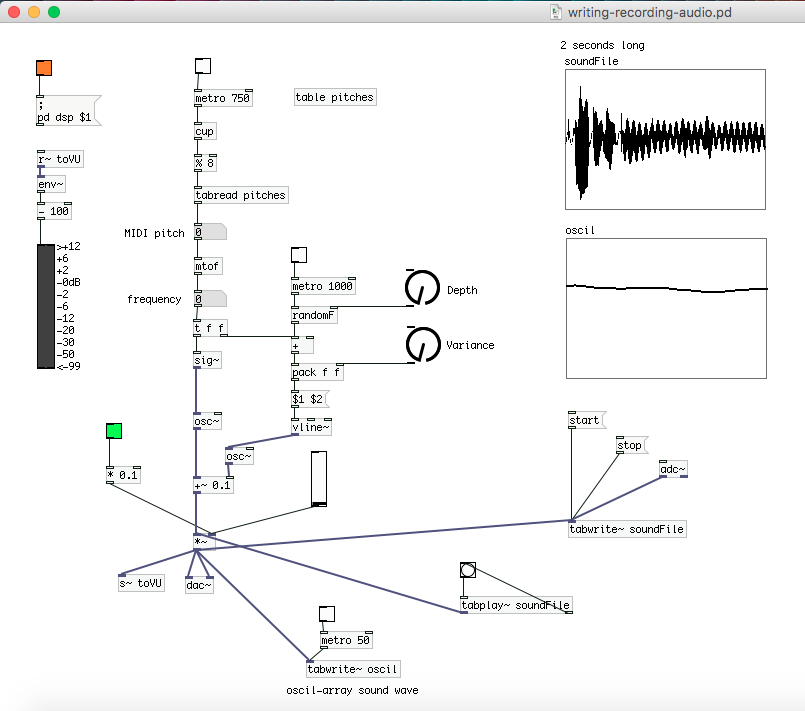

Audio recording + audio playback using the [tabwrite~] and [tabplay~] objects. This makes it possible to create a loop by recording multiple audio takes (which can also overlap, depending on their length).
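To make the overlapping more concrete, here is a small Python sketch of the principle, not of the Pd objects themselves. It assumes each recorded take is a list of samples between -1.0 and 1.0, repeats every take at its own length, and sums them; the function name `mix_loops` is invented for this example.

```python
def mix_loops(buffers, total_samples):
    """Repeat each recorded buffer at its own length and sum them, clipped to [-1.0, 1.0]."""
    output = []
    for n in range(total_samples):
        # Each buffer wraps around independently, so takes of different
        # lengths drift against each other and overlap differently over time.
        sample = sum(buf[n % len(buf)] for buf in buffers if buf)
        output.append(max(-1.0, min(1.0, sample)))
    return output


# Two tiny "recordings" of different lengths (2 and 3 samples).
take_a = [0.5, 0.0]
take_b = [0.25, 0.25, 0.25]
print(mix_loops([take_a, take_b], 6))
```

Because the takes have different lengths, the combined pattern only repeats after the lengths line up again, which is one reason overlapping loops can sound less mechanical than a single one.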

Feedback Loop = Score?

an alternative could be: