User:Manetta/thesis/thesis-outline: Difference between revisions

Revision as of 01:53, 3 February 2016

outline

intro

NLP

With 'i-could-have-written-that' i would like to look at technologies that process natural language (natural language processing, NLP). Expectations of NLP systems vary widely, but full coverage of natural language is unlikely. By regarding NLP software as a cultural object, i'll focus on the inner workings of these technologies: what are the technical and social mechanisms that systemize our natural language so that it can be understood by a computer?

NLP is a category of software that is concerned with the interaction between human language and machine language. NLP is mainly present in the fields of computer science, artificial intelligence and computational linguistics. On a daily basis people deal with services that contain NLP techniques: translation engines, search engines, speech recognition, auto-correction, chatbots, OCR (optical character recognition), license plate detection, and data-mining. For 'i-could-have-written-that', i would like to put NLP software at the centre, not only as a technology but also as a cultural object, to reveal how NLP software is constructed to understand human language, and what side effects these techniques have.

knowledge discovery in data (data-mining)

For this year's graduation project, i would like to focus on the practice of text mining, which is a subfield of the so-called field of 'data mining'.

title: i could have written that

Text mining is an analytical practice of searching for patterns in text, following "a data-driven approach", and assigning these patterns to (predefined) profiles. It is part of a bigger information-construction process (called Knowledge Discovery in Data, KDD), which involves source selection, data creation, simplification, translation into vectors, and testing techniques.

context

Text mining is a politically sensitive technology, closely related to the surveillance and privacy discussions around 'big data'. The technique sits in the middle of tense discussions about capturing people's behavior for security reasons, a practice that affects the privacy of a lot of people and is accompanied by an unpleasant controlling force that seems to be omnipresent. After Edward Snowden's disclosures of the NSA's data capturing program in 2013, a wider public became aware of the silent data collecting activities of a governmental agency, for example on phone metadata (http://www.popularmechanics.com/military/a9465/nsa-data-mining-how-it-works-15910146/). Since October 2014, UK copyright law has included a special exception that makes text mining practices possible on intellectual property for non-commercial use (https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/375954/Research.pdf). What is problematic is the skewed balance between data producers and data analysts, also framed as 'data colonialism' (http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2709498), and the accompanying governing role this gives to data analytics, for example by constructing your list of search results according to your data profile.

The seemingly magical effect of text mining results, caused by the difficulty of understanding how these results are constructed, makes it difficult to form an opinion about text mining techniques. It is even difficult to formulate what exactly the problem is, as many people tend to agree with the calculations and word counts that are seemingly executed. "What exactly is the problem?" and "This is the data that speaks, right?" are questions that need to be challenged in order to have a conversation about text mining techniques at all.

This thesis will attempt to show the subjectivity that is present in text mining software by zooming in on the construction of so-called 'vectors', a key element in the process, where text and numbers meet.

hypothesis

The results of data-mining software are not mined; they are constructed.

reading technique: a 'vector'

Data is seen as a material that can easily be extracted from the web, and is regarded as a little 'truth-snapshot' taken at a certain moment in time. In text mining, the input material consists of written texts transformed into data. The data consists of a word + number, a feature + weight, a key + value pair, or, as a linguist would call it, a subject + predicate combination. These are the material building blocks with which text mining outcomes are constructed. The immaterial building blocks are: points of departure, source selection, noise reduction, and testing techniques. All these elements affect the vector. (In that way it could be understood as a cybernetic system of control.)

A vector implies the moment of representing a word with a number. This number can represent a word count, ..., ...
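A minimal sketch of that moment, assuming the number is a plain word count. The function name `count_vector`, the tokenisation rule and the example sentence are illustrative assumptions, not taken from any particular text-mining package:

```python
import re
from collections import Counter

def count_vector(text):
    """Turn a text into word + number pairs (hypothetical helper)."""
    # Assumption: lowercase everything and keep only runs of letters and apostrophes.
    # Each of these choices (case, punctuation, what counts as a word)
    # already shapes the numbers that come out.
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(words)

vector = count_vector("The data speaks. The data is the data.")
for word, number in vector.items():
    print(word, number)
```

Even in this small case the numbers depend on decisions made before counting: a different tokenisation rule, or a list of words to ignore, would give a different vector for the same sentence.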

systemization of language

The systemization of language is needed to fulfil the aim of developing software that processes large amounts of text and is able to 'read' its content. Where could this aim come from? The philosopher of language J. L. Austin shows that language is a speech act, happening as a social act. In these speech acts, the words we use have no external, objective meaning. Heidegger goes even further and says that, while hammering, the person who hammers does not regard the hammer in a reflective sense. The person is in the moment of using the hammer to achieve something. The only moment when the person is confronted with a representational sense of the hammer is when the hammer breaks down. It is at that moment that the person learns a bit more about what a hammer 'is'.

project & thesis (merge)

voice: accessible for a wider public

needed: problem formulations that connect with day-to-day life

As 'i-could-have-written-that' is driven by textual research, it would feel quite natural to merge the practical and written (reflective) elements of the graduation procedure into one project. The format i have in mind at the moment, a publication series, could also bring the two together. Next to written reflections on the hypothesis of constructed results, i would like to work on hands-on prototypes with text-mining software.

As a working method, i would like to isolate and analyse different data-mining elements and test the hypothesis on them. The elements selected so far are: terminology (metaphors + history), software (data construction + ... ), and presentation of results.

data mining elements

- terminology ('mining', 'data')

- 'mining' → from 'mining' minerals to 'mining' data; (wiki-page)

- 'data mining' & mining natural resources

- 'data mining' & archeology

- 'data mining' & writing

- 'data' → data as autonomous entity; from: information, to: data science

- text-processing

- from: able to check results with senses (OCR), to: intuition (data-mining)

- parsing, how text is treated: as n-grams, chunks, bag-of-words, characters

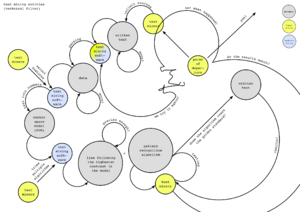

- workflow of mining software (e.g. Pattern, Weka); (software workflow diagram) & circularity

- Knowledge Discovery in Data (KDD) workflow & circularity

- prototype: how different aims 'read' the data according to their perspective ... (recognizing patterns in a game of chance)

- presentation of results
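The text-processing item above lists several ways in which text can be treated. A small sketch of three of them (characters, bag-of-words, n-grams) is given here, under stated assumptions: the function names and the tokenisation rule are hypothetical, and 'chunks' are left out because they need a part-of-speech tagger.

```python
import re
from collections import Counter

def tokenize(text):
    # Assumption: lowercase, keep only runs of letters and apostrophes.
    return re.findall(r"[a-z']+", text.lower())

def as_characters(text):
    # The text as a sequence of single characters, spaces included.
    return list(text)

def as_bag_of_words(text):
    # The text as unordered word counts; word order is thrown away.
    return Counter(tokenize(text))

def as_ngrams(text, n=2):
    # The text as overlapping sequences of n consecutive words.
    words = tokenize(text)
    return [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]

sentence = "i could have written that"
print(as_characters(sentence)[:7])
print(as_bag_of_words(sentence))
print(as_ngrams(sentence, n=2))
```

Each view keeps something and discards something else: the bag-of-words forgets word order, the n-grams keep only a short window of it, and the characters know nothing about words at all.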

theory

- solutionism & techno optimism

- big-data, machine learning & data-mining criticism

research material

→ filesystem interface, collecting research related material (+ about the workflow)

→ wikipage for 'i-could-have-written-that' (list of prototypes & inquiries)

→ little glossary

mining as ideology

* from mining minerals to mining data

anthropomorphism

* anthropomorphic qualities of a computer (?)

* the photographic apparatus → the data apparatus (annotations)

* Joseph Weizenbaum's questions in Computer Power and Human Reason

text processing

* semantic math: averaging polarity rates in Pattern (text mining software package)

* notes on wordclouds

* automatic reading machines; from encoding-decoding to constructed-truths

* index of WordNet 3.0 (2006)

data as autonomous entity

* knowledge driven by data - whenever i fire a linguist, the results improve

other

* (laughter) - it's embarrassing but these are the words

* call for a syntactic view; Florian Cramer & Benjamin Bratton (text)

* EUR PhD presentation 'Sentiment Analysis of Text Guided by Semantics and Structure' (13-11-2015)

* index of Roget's thesaurus (1805)

* comparing the classification of the word 'information' Thesaurus (1911) vs. WordNet 3.0 (2006)

annotations

- Alan Turing - Computing Machinery and Intelligence (1950)

- The Journal of Typographic Research - OCR-B: A Standardized Character for Optical Recognition (V1N2) (1967); → abstract

- Ted Nelson - Computer Lib & Dream Machines (1974);

- Joseph Weizenbaum - Computer Power and Human Reason (1976); → annotations

- Walter J. Ong - Orality and Literacy (1982);

- Vilem Flusser - Towards a Philosophy of Photography (1983); → annotations

- Christiane Fellbaum - WordNet, an Electronic Lexical Database (1998);

- Charles Petzold - Code, the hidden languages and inner structures of computer hardware and software (2000); → annotations

- John Hopcroft, Rajeev Motwani, Jeffrey Ullman - Introduction to Automata Theory, Languages, and Computation (2001);

- James Gleick - The Information, a History, a Theory, a Flood (2008); → annotations

- Matthew Fuller - Software Studies. A lexicon (2008);

- Language, Florian Cramer; → annotations

- Algorithm, Andrew Goffey;

- Marissa Mayer - the physics of data, lecture (2009); → annotations

- Matthew Fuller & Andrew Goffey - Evil Media (2012); → annotations

- Antoinette Rouvroy - All Watched Over By Algorithms - Transmediale (Jan. 2015); → annotations

- Benjamin Bratton - Outing A.I., Beyond the Turing test (Feb. 2015) → annotations

- Ramon Amaro - Colossal Data and Black Futures, lecture (Oct. 2015); → annotations

- Benjamin Bratton - On A.I. and Cities : Platform Design, Algorithmic Perception, and Urban Geopolitics (Nov. 2015);

bibliography (five key texts)

- Vilem Flusser - Towards a Philosophy of Photography (1983); → annotations

- Language, Florian Cramer (2008); → annotations

- Antoinette Rouvroy - All Watched Over By Algorithms - Transmediale (Jan. 2015); → annotations

- The Journal of Typographic Research - OCR-B: A Standardized Character for Optical Recognition (V1N2) (1967); → abstract