Sniff, Scrape, Crawl (Thematic Project)


Revision as of 13:02, 15 January 2011

Sniff, Scrape, Crawl…

Trimester 2, Jan.-March 2011

Thematic Project Tutors: Aymeric Mansoux, Michael Murtaugh, Renee Turner

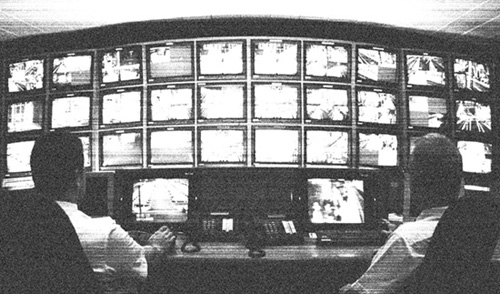

Our society is one not of spectacle, but of surveillance…

Michel Foucault, Discipline and Punish: The Birth of the Prison, trans. Alan Sheridan (New York, 1979), p. 217

We are living in an age of unprecedented surveillance. But unlike the ominous specter of Orwell’s Big Brother, where power is clearly defined and always palpable, today’s methods of information gathering are much more subtle and woven into the fabric of our everyday life. Through the use of seemingly innocuous algorithms, Amazon tells us which books we might like, our trusted browser tracks our searches and Last.fm connects us with people who have similar tastes in music. Immersed in social media, we commit to legally binding contracts by agreeing to ‘terms of use’. Having made the pact, we Twitter our subjective realities in fewer than 140 characters, wish dear friends happy birthday on Facebook and mobile-upload our geotagged videos to YouTube.

Where once surveillance technologies belonged to governmental agencies, the web has added another less optically-driven means of both monitoring and monetizing our lived experiences. As the line between public and private has become more blurred and the desire for convenience ever greater, our personal data has become a prized commodity upon which industries thrive. Perversely, we have become consumers who simultaneously produce the product through our own consumption.

Sniff, Scrape, Crawl… is a thematic project examining how surveillance and data-mining technologies shape and influence our lives, and what consequences they have on our civil liberties. We will look at the complexities of sharing information in exchange for waiving privacy rights. Next to this, we will look at how our fundamental understanding of private life has changed as public display has become more pervasive through social networks. Bringing together practical exercises, theoretical readings and a series of guest lectures, Sniff, Scrape, Crawl… will attempt to map the data trails we leave behind and look critically at the buoyant industries that track and commodify our personal information.

References:

- Michel Foucault, Discipline and Punish: The Birth of the Prison, trans. Alan Sheridan (New York, 1979)

- Wendy Hui Kyong Chun, Control and Freedom: Power and Paranoia in the Age of Fiber Optics (London, 2006)

Note: This Thematic Project will be organized and taught by Aymeric Mansoux, Michael Murtaugh and Renee Turner, and will involve a series of related guest lectures and presentations.

Workshop 1: 20-21 Jan, 2011

Day 1 Morning: SNIFF, Web 0.0

- The joy of basic client/server Netcat Chat

- Internet geography http://hulu.com

- Connect to the pzwart3 "geoip" script with netcat (TODO: put the script on the server, make a recipe and link it here)

- Repeat the same with a browser and witness "Software sorting"

- Create a generic user account on a non-discriminated machine, following these instructions

- Tunnel traffic through this machine with this trick

- Sniff the requested URLs on the proxy machine with urlsnarf

- TraceRoute & DNS

- http://www.visualcomplexity.com/vc/project.cfm?id=332

- http://laramies.blogspot.com/2006/05/2d-and-3d-traceroute-with-scapy.html

- VPN

- ssh, ports, IP addresses, protocols, DNS
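The client/server exchange behind the netcat chat can also be sketched in Python. This is only an illustration, assuming Python 3 and the standard `socket` module; the function names `serve_once` and `chat_once` are made up for this example and are not part of the workshop material. One side listens on a port, the other connects and sends bytes, which is roughly what a listening and a connecting netcat do.

```python
import socket
import threading


def serve_once(server_sock, reply_prefix=b"server heard: "):
    """Accept one client, read one message, answer it, then hang up."""
    conn, _addr = server_sock.accept()
    with conn:
        data = conn.recv(1024)              # whatever the client sent
        conn.sendall(reply_prefix + data)   # closing conn signals end of reply


def chat_once(message, host="127.0.0.1"):
    """Start a throwaway local server and exchange one message with it."""
    server_sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server_sock.bind((host, 0))             # port 0: the OS picks a free port
    server_sock.listen(1)
    port = server_sock.getsockname()[1]

    worker = threading.Thread(target=serve_once, args=(server_sock,))
    worker.start()

    # Client side: connect, send, then read until the server closes.
    chunks = []
    with socket.create_connection((host, port)) as client:
        client.sendall(message)
        while True:
            chunk = client.recv(1024)
            if not chunk:
                break
            chunks.append(chunk)

    worker.join()
    server_sock.close()
    return b"".join(chunks)


if __name__ == "__main__":
    print(chat_once(b"hello\n"))
```

Everything here runs on the local machine only; replacing `127.0.0.1` with another host's address is what turns it into a chat across the network.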

Explain the code... (Show CGI scripts)

Day 1 Afternoon: SCRAPE, Web 1.0 / Simple Spider

- Start with ipython

- Getting documentation from ipython

- Using urllib, open a connection to a page (connect)

- Scan for images in a page

- Loop

- Do everything by hand...

- Make a script, put it in bin

(how to )

Open connections, interrogate the response (content_type / length / file), html5lib parsing...
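The "open a connection, interrogate the response, scan for images" steps might look roughly like the sketch below. It assumes Python 3 and, to stay dependency-free, uses the standard library's `html.parser` in place of html5lib. The names `ImageFinder`, `find_images` and `fetch` are illustrative only.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.request import urlopen


class ImageFinder(HTMLParser):
    """Collect the src of every <img> tag as an absolute URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            src = dict(attrs).get("src")
            if src:  # skip <img> tags without a src attribute
                self.images.append(urljoin(self.base_url, src))


def find_images(html, base_url):
    """Return the image URLs found in an HTML string."""
    finder = ImageFinder(base_url)
    finder.feed(html)
    return finder.images


def fetch(url):
    """Open a connection and return (content type, length header, text)."""
    with urlopen(url) as response:
        content_type = response.headers.get_content_type()
        length = response.headers.get("Content-Length")  # may be None
        charset = response.headers.get_content_charset() or "utf-8"
        text = response.read().decode(charset, errors="replace")
    return content_type, length, text
```

In an ipython session one could call `fetch(url)` on a page, pass the returned text to `find_images(text, url)`, and loop over the result; `fetch` needs network access, while `find_images` works on any HTML string.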

- HTTP, URL, CSS, get vs. post, query strings, urlencoding
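As a small illustration of query strings, urlencoding and the difference between GET and POST, the sketch below uses Python 3's `urllib.parse` and `urllib.request`. The helper names and the `example.org` address are placeholders, not something from the workshop. With GET the encoded parameters travel in the URL; with POST the same encoded string travels in the request body.

```python
from urllib.parse import urlencode, urlsplit, parse_qs
from urllib.request import Request


def build_get_url(base, params):
    """GET: parameters are urlencoded and appended after a '?'."""
    return base + "?" + urlencode(params)


def read_query(url):
    """Decode the query string of a URL back into a dict of lists."""
    return parse_qs(urlsplit(url).query)


def build_post_request(url, params):
    """POST: the same encoded string is sent as the request body."""
    body = urlencode(params).encode("ascii")
    return Request(url, data=body)  # a Request with data defaults to POST


if __name__ == "__main__":
    url = build_get_url("http://example.org/search",
                        {"q": "sniff & scrape", "page": 2})
    print(url)
    print(read_query(url))
    print(build_post_request("http://example.org/search",
                             {"q": "x y"}).get_method())
```

Nothing is sent over the network here: `Request` only describes a request, and it would take `urllib.request.urlopen` to actually perform it.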

Day 2 Morning: BRAZIL Web 2.0 / API

- Movement from the "surface scrape" to the database structure of a web 2.0 service.

- Goal: Apply the same spider script -- now using connections available only via the API.

- XML, JSON, RSS
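To make the contrast with surface scraping concrete, here is a minimal sketch of reading structured API output with Python 3's standard `json` and `xml.etree.ElementTree` modules. The sample documents and field names are invented for illustration and do not come from any particular service.

```python
import json
import xml.etree.ElementTree as ET

# Invented sample data standing in for what an API might return.
SAMPLE_JSON = '{"user": "alice", "tags": ["surveillance", "maps"]}'

SAMPLE_RSS = """<rss version="2.0"><channel><title>Example feed</title>
<item><title>First post</title><link>http://example.org/1</link></item>
<item><title>Second post</title><link>http://example.org/2</link></item>
</channel></rss>"""


def tags_from_json(text):
    """JSON maps directly onto Python dicts and lists."""
    return json.loads(text)["tags"]


def items_from_rss(text):
    """RSS is XML: walk the tree and pull title/link from each <item>."""
    root = ET.fromstring(text)
    return [(item.findtext("title"), item.findtext("link"))
            for item in root.iter("item")]


if __name__ == "__main__":
    print(tags_from_json(SAMPLE_JSON))
    print(items_from_rss(SAMPLE_RSS))
```

Unlike the image spider, no guessing about page layout is needed: the structure of the data is given by the service itself.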

Day 2 Afternoon: DIY CRAWLing with Django

Slowly but steadily, a new structure emerges.

Mapping JSON/API structure to a database. Code to map source to db. Using Django admin to visualize data.

Creating views...

>> Assignment in teams, for instance:

- Get a list of tags from a user ID
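The idea of mapping API output to a database, and then asking it for the tags of a user ID, can be sketched without a full Django project. The example below deliberately swaps Django's models for the standard `sqlite3` module so that it runs on its own. The table layout, the sample records and the function names are assumptions made for illustration; in Django the two tables would correspond to two model classes.

```python
import json
import sqlite3

# Invented sample of what a crawled API response might contain.
SAMPLE = """[
  {"userid": 1, "name": "alice", "tags": ["maps", "privacy"]},
  {"userid": 2, "name": "bob", "tags": ["privacy"]}
]"""


def build_db(records):
    """Map a list of JSON records onto two related tables."""
    db = sqlite3.connect(":memory:")  # throwaway in-memory database
    db.execute("CREATE TABLE user (id INTEGER PRIMARY KEY, name TEXT)")
    db.execute(
        "CREATE TABLE tag (user_id INTEGER REFERENCES user(id), label TEXT)"
    )
    for rec in records:
        db.execute("INSERT INTO user (id, name) VALUES (?, ?)",
                   (rec["userid"], rec["name"]))
        db.executemany("INSERT INTO tag (user_id, label) VALUES (?, ?)",
                       [(rec["userid"], label) for label in rec["tags"]])
    db.commit()
    return db


def tags_for_user(db, userid):
    """Return the tags stored for one user ID, alphabetically."""
    rows = db.execute(
        "SELECT label FROM tag WHERE user_id = ? ORDER BY label", (userid,)
    )
    return [label for (label,) in rows]


if __name__ == "__main__":
    db = build_db(json.loads(SAMPLE))
    print(tags_for_user(db, 1))
```

Once the data sits in tables like these, queries across users (who shares a tag with whom, for example) become possible that the original service may not offer directly.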

Building a web crawler with Django

Assignment

Case Study: Naked on Pluto (example of movement from survey to strategy)

Assignment is:

- selection of a particular "service"

- Visualisation / Map of a "unit" of data from said service

- Plan for a tactical database to make use of crawled data from the service.

(This could be what-if scenarios based on the structure of the data: what kinds of connections might you make? The point is exploring and fantasizing about possibilities *grounded in data*.)