User:Manetta/thesis/chapter-1

i could have written that - chapter 1

1.0 - text mining as technology → culture included

Text mining involves natural language processing (NLP) techniques in order to function as an effective analytical system. By processing written natural language, the technology aims to derive information from a large set of written texts.

Text mining is a politically sensitive technology, closely related to the surveillance and privacy discussions around 'big data'. The technique is at the center of tense discussions about capturing people's behavior for security reasons, which affects the privacy of a lot of people and comes with an unpleasant controlling force that seems to be omnipresent. After Edward Snowden disclosed the NSA's data collection programs in 2013, a wider public became aware of the silent data collection carried out by a governmental agency, for example on phone metadata. Since October 2014, UK copyright law has included a special exception that makes text mining of intellectual property possible for non-commercial use. What is problematic is the skewed balance between data producers and data analysts, also framed as 'data colonialism', and the governing role this gives to data analytics, for example by constructing your list of search results according to your data profile.

questions

- If text mining is regarded as a writing system, what and where does it write?

- What are the levels of construction in text mining software culture?

- What changes when text mining technology is considered as a reading machine?

- How does the metaphor of 'mining' affect the process?

- How much can be built on a cultural side product (such as the text that is commonly used, as it is extracted from, e.g., social media)?


text mining as reading machine

The magical effects of text mining results, caused by the hidden presence of data analytics and the multi-layered complexity of text mining software, make it difficult to formulate an opinion about text mining techniques. As text mining is an analytical process, it is often understood as a 'reading' machine.

- 'reading' connotations?

(A short note here on the use of written text as source material. As Vilém Flusser discussed in his essay 'Towards a Philosophy of Photography' (1983): “images are not 'denotative' (unambiguous), but 'connotative' (ambiguous) → complexes of symbols, providing space for connotation”. There is much more to say here, but in short, the same holds for text (and data): it is not 'denotative' (unambiguous) but 'connotative' (ambiguous), full of complexes of symbols that provide space for connotation.)

- 'machine' connotations?

..., Weizenbaum, about 'machine'

If text mining software is regarded as a reading system, it becomes even more difficult to formulate what exactly the problem is. Many people tend to agree with the calculations and word counts that come out of the software. "What exactly is the problem?" and "This is the data that speaks, right?" are questions that need to be challenged in order to have a conversation about text mining techniques at all. These are examples of what I would like to call 'algorithmic agreeability'.

'mining' data implies direct non-mediated access to the source

mining metaphor

The term 'data mining' is a fashionable buzzword used to speak about the practice of data analytics. Data mining techniques are nothing new; they have been around since the 1960s. (??? reference!) But the term became more fashionable with the increasing amounts of data that are published online and made accessible for data analytics in one way or another: a phenomenon that has been called 'big data'. (??? reference!) Applications vary from predicting an author's age to predicting how customers feel about a brand or product.

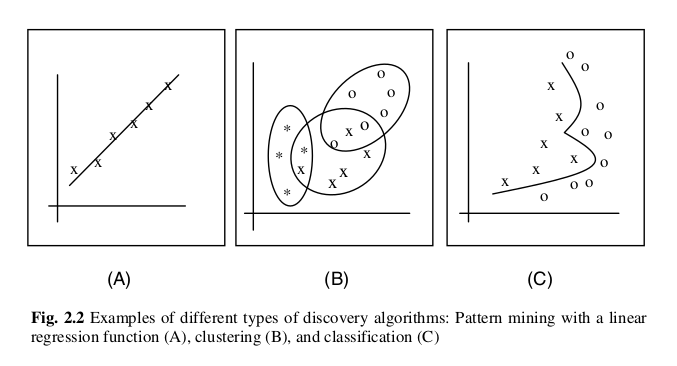

However, the term 'data mining' is not very accurate, and calling data-analysis software 'data mining software' is misleading. First, data is not actually the object that a 'data mining' process is looking for. While processing data, a 'miner' rather looks for the patterns that occur. Would 'pattern mining' be a more accurate term?

The term contains the metaphor of 'mining', which hints that the software extracts information directly from the data. It implies that there is no mediating layer between the pool of texts and the information that rolls out of the software. And even if it is patterns rather than data that are mined for, patterns do not suddenly appear out of the big pool of data items either. Later we will look at a text mining workflow and at the steps that affect its outcomes. Using the 'mining' metaphor leads to:

- no human responsibility

- outcomes regarded to be objective

- “it's the data that speaks”

KDD steps (Knowledge Discovery in Data)

As 'mining' is not a very accurate description of the technology that analyzes text, it is helpful to look at a term used in the academic field: 'Knowledge Discovery in Data' (KDD) (Custers ed. 2013). Here, 'data mining' is only one of five steps in total, and it can only be performed when the other steps are executed as well.

step 1 --> data collection

step 2 --> data preparation

step 3 --> data mining

step 4 --> interpretation

step 5 --> determine actions

To this list I would like to add a few extra steps (marked with *) and rename some of the existing ones:

* step 0 --> deciding on point of view

step 1 --> text collection

step 2 --> text preparation

step 3 --> construction of data

* step 3a --> creating a vector space

(turning words into numbers)

* step 3b --> creating a model

(searching for contrast in the graph)

step 4 --> interpretation

step 5 --> determine actions

steps 1, 2, 3, 4 and 5 from: Data Mining and Profiling in Large Databases, Bart Custers, Toon Calders, Bart Schermer, and Tal Zarsky (Eds.) (2013); steps 0, 3a and 3b from: #!PATTERN+ project

Step 0 highlights the subjective process of deciding which datasets to work with. For example, the World Well Being Project asked 66,000 Facebook users to share their messages, in order to investigate whether the writing style on that platform could reveal something about the psychological profiles the users seemed to fit.

Later I will zoom in on the 'grey' moments of text mining software. Most of these moments fall under step 3, the moment of data mining. Distinguishing between the moment when written text is transformed into numbers (3a) and the moment when the 'model' is created (3b) offers some clarity that helps to look at these actions individually.
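The extended KDD steps listed above can be sketched as a chain of small functions, which makes visible that step 0 (the point of view) is a separate decision from steps 3a and 3b. This is a minimal, hypothetical sketch in plain Python, not Pattern code: the toy texts, the whitespace tokenizer, and the naive 'model' (the most frequent words) are all assumptions made for illustration.

```python
from collections import Counter

# Step 1: text collection. Hypothetical toy texts standing in for crawled posts.
TEXTS = [
    "the new season of the show is boring",
    "what a great and funny show",
    "boring boring boring",
]

def prepare(text):
    """Step 2: text preparation (here only lowercasing and splitting on spaces)."""
    return text.lower().split()

def to_vector(tokens):
    """Step 3a: turn words into numbers, one count per word."""
    return Counter(tokens)

def build_model(vectors, top=3):
    """Step 3b: a deliberately naive 'model': the most frequent words overall."""
    total = Counter()
    for vector in vectors:
        total.update(vector)
    return [word for word, _ in total.most_common(top)]

def run_pipeline(point_of_view):
    """Step 0 decides which texts are used at all; steps 4 and 5 stay human."""
    selected = [text for text in TEXTS if point_of_view(text)]
    vectors = [to_vector(prepare(text)) for text in selected]
    return build_model(vectors)

print(run_pipeline(lambda text: "show" in text))  # only texts about the show
print(run_pipeline(lambda text: True))            # every collected text
```

Changing only the step-0 filter changes which word tops the list ('boring' dominates once the third text is included), without touching steps 2, 3a or 3b.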

text mining with a cultural side-product

In practice, data is derived from written blog posts, tweets, news articles, Wikipedia articles, Facebook messages and many other sources. When these media formats are created, the writer is concentrating on formulating a sentence and getting a message across. It is John who tries to tell his Twitter followers that the latest BBC game show is much less interesting this season. John is not consciously creating data. It is a side product, a product of the current media culture that happens in public space.

1.1 – Pattern's* gray spots

(*) Pattern is a text mining software package that includes all of the KDD steps mentioned above. The software is written and developed at the University of Antwerp as part of CliPS, a research center working in the field of Computational Linguistics & Psycholinguistics. It is a basic toolkit that includes the main 'mining' tools (such as text crawlers, text parsers, machine learning tools and visualization scripts).

questions

- What are the levels of construction in text mining software itself?

- What gray spots appear when text is processed?

- What is meant by 'grayness'? How can it be used as an approach to software critique?

- Text processing: how does written text transform into data?

- Bag-of-words, or 'document'

- 'count' → 'weight'

- trial-and-error, modeling the line

- Testing process

- how is an algorithm actually only 'right' according to that particular test-set, with its own particularities and exceptions?

- loops to improve your outcomes


idea of 'greyness'?

...

(Fuller & Goffey, 2012)

bag-of-words, or 'document'

written text ←→ text mining ←→ information

The simple act of counting words in a document is the very first step in processing text into numbers. Could the text be called data from this point on? Data as a format of written text that is countable and processable by computer software? The text is now 'ordered', at least in computational terms.

>>> from pattern.vector import Document

>>> document = Document("a black cat and a white cat", stopwords=True)

>>> print document.words

{u'a': 2, u'and': 1, u'white': 1, u'black': 1, u'cat': 2}

example of bag-of-words tool, source: pattern-2.6/examples/05-vector/01-document.py

For the computer, language is nothing more than a 'bag-of-words' (Murtaugh, 2016). All meaning of the sentences is dropped, and so are all connections between words. What remains is a list of the most commonly used words: the original word order is discarded, and each word is connected to a number to make the text 'digestible' for a computer system. The name 'bag-of-words' brings up the image of a huge bag containing piles of the same words, each pile a different height. A top 10 of the most common words can already give insight into which topics are present in a text. Pattern wraps this technique in its 'Document' class. This creates a confusing double use of the term 'document'. While the counted text was extracted from a document itself (a blog post, tweet or essay), here the bag-of-words set is called a document again, as if nothing had happened and we were still looking at the actual source.
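To make the 'bagging' step concrete, here is a minimal sketch in plain Python 3 using `collections.Counter`. It roughly mirrors what `Document.words` shows in the example above (with stopwords kept), but it is not Pattern's implementation: splitting on whitespace is an assumption, and Pattern uses its own tokenizer.

```python
from collections import Counter

def bag_of_words(text):
    """Count how often each word occurs; the order of the words is discarded."""
    return Counter(text.lower().split())

bag = bag_of_words("a black cat and a white cat")
print(bag)
print(bag.most_common(2))  # a 'top 2' of the most common words

# A scrambled sentence ends up in exactly the same bag.
print(bag == bag_of_words("cat white a and cat black a"))
```

The last line shows the loss described above: once text is bagged, a sentence and a scrambled version of it can no longer be told apart.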

'count' → 'weight'

Soon after the first (brutal...) act of 'bagging' text into word counts, the journey towards 'meaningful text' starts again. To compare how similar two documents are (in terms of word use), 'weight' is introduced. It normalizes term frequency by dividing the count of each word by the total number of words in that document, so that a short and a long document can be compared.

Document.words stores a dict of (word, count)-items. Document.vector stores a dict of (word, weight)-items, where weight is the term frequency normalized (0.0-1.0) to remove document length bias.

description of a 'document' in Pattern's source code, source: pattern-2.6/pattern/vector/__init__.py

>>> from pattern.vector import Document

>>> document = Document("a black cat and a white cat", stopwords=True)

>>> print document.vector.features

[u'a', u'and', u'white', u'black', u'cat']

>>> for feature, weight in document.vector.items():

...     print feature, weight

a 0.285714285714

and 0.142857142857

white 0.142857142857

black 0.142857142857

cat 0.285714285714

example to show word-weights, source: pattern-2.6/examples/05-vector/01-document.py
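The weights above are simply count divided by document length (2/7 for 'a' and 'cat', 1/7 for the rest). The sketch below reproduces that normalization in plain Python 3 and then compares two documents with cosine similarity. Cosine similarity is one common way to compare such weighted vectors; whether and how Pattern applies it is not covered here, so treat `term_frequency` and `cosine_similarity` as hypothetical helpers, not Pattern's API.

```python
import math
from collections import Counter

def term_frequency(text):
    """Weight = count of a word / total number of words in the document."""
    words = text.lower().split()
    counts = Counter(words)
    return {word: count / len(words) for word, count in counts.items()}

def cosine_similarity(v1, v2):
    """Cosine of the angle between two sparse vectors stored as dicts."""
    dot = sum(weight * v2.get(word, 0.0) for word, weight in v1.items())
    norm1 = math.sqrt(sum(w * w for w in v1.values()))
    norm2 = math.sqrt(sum(w * w for w in v2.values()))
    if norm1 == 0 or norm2 == 0:
        return 0.0
    return dot / (norm1 * norm2)

short = term_frequency("a black cat and a white cat")
longer = term_frequency("a black cat and a white cat " * 10)
print(short["cat"], longer["cat"])  # same weight: the length bias is removed
print(cosine_similarity(short, longer))
print(cosine_similarity(short, term_frequency("a dog")))
```

Repeating the same sentence ten times leaves every weight unchanged, which is exactly the 'document length bias' that Pattern's docstring says the normalization removes.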

trial-and-error, modeling the line

(description of how a mining process tries many pattern recognition algorithms, to find the most 'effective')

Once the line has been drawn, you can throw all the data points away, because you have a model. This is the moment of truth construction.

source: Data Mining and Profiling in Large Databases, Bart Custers, Toon Calders, Bart Schermer, and Tal Zarsky (Eds.) (2013)
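What 'drawing the line' can mean is shown in the sketch below, assuming the simplest possible case: an ordinary least-squares straight line through a handful of hypothetical two-dimensional points. Real mining processes try many (and more complex) algorithms; least squares is only a stand-in chosen for readability.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(model, x):
    """Use only the two numbers of the model; no data points are needed."""
    slope, intercept = model
    return slope * x + intercept

# Hypothetical data points (x, y).
points = [(1, 2.1), (2, 3.9), (3, 6.2), (4, 7.8)]
model = fit_line(points)
del points  # "throw all the data points away": only the model remains

print(model, predict(model, 5))
```

After `del points`, every later 'answer' comes from two numbers alone, which is what makes this the moment where the model, rather than the data, starts to speak.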

testing data

how is an algorithm actually only 'right' according to that particular test-set, with its own particularities and exceptions?

Option to look at testing techniques, such as a 'gold standard', an 80%/20% split, and more...

# The only way to really know if you're classifier is working correctly

# is to test it with testing data, see the documentation for Classifier.test().

comment written in Pattern's KNN example, source: pattern-2.6/examples/05-vector/04-knn.py
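The sketch below illustrates the 80%/20% idea and why a score is only 'right' relative to one particular test set. It does not use Pattern's `Classifier.test()`; instead it assumes a hypothetical 1-nearest-neighbour classifier based on shared word counts, a hypothetical hand-labelled dataset, and a seeded shuffle to decide which examples end up in the test set.

```python
import random
from collections import Counter

def train_test_split(items, ratio=0.8, seed=1):
    """Shuffle a copy of the items and split them into training and test sets."""
    items = list(items)
    random.Random(seed).shuffle(items)
    cut = int(len(items) * ratio)
    return items[:cut], items[cut:]

def overlap(a, b):
    """Number of shared words between two texts (a crude similarity)."""
    return sum((Counter(a.split()) & Counter(b.split())).values())

def classify(text, training):
    """1-nearest neighbour: copy the label of the most similar training text."""
    _, best_label = max(training, key=lambda item: overlap(text, item[0]))
    return best_label

def accuracy(training, test):
    """Fraction of test examples whose predicted label matches the given one."""
    correct = sum(classify(text, training) == label for text, label in test)
    return correct / len(test)

# Hypothetical, hand-labelled examples.
data = [
    ("great show tonight", "pos"), ("what a great episode", "pos"),
    ("funny and great", "pos"), ("lovely show", "pos"),
    ("boring show tonight", "neg"), ("what a boring episode", "neg"),
    ("dull and boring", "neg"), ("awful show", "neg"),
    ("great fun", "pos"), ("boring again", "neg"),
]
training, test = train_test_split(data)
print(len(training), len(test), accuracy(training, test))
```

Changing `seed` changes which two examples land in the test set, and with it the reported accuracy, while the classifier itself stays the same.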

loops to improve your outcomes

the threshold of positivity can be lowered or raised

# The positive() function returns True if the string's polarity >= threshold.

# The threshold can be lowered or raised, but overall for strings with multiple

# words +0.1 yields the best results.

>>> from pattern.en import positive

>>> print "good:", positive("good", threshold=0.1)

>>> print " bad:", positive("bad")

comment written in Pattern's sentiment example, source: pattern-2.6/examples/03-en/07-sentiment.py
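How much a threshold decides the outcome can be shown with a few numbers. The sketch assumes hypothetical polarity scores for five strings and a hypothetical `is_positive()` helper that takes a score instead of a string. It is not Pattern's `positive()`, only the comparison that its comment describes (polarity >= threshold).

```python
def is_positive(polarity, threshold=0.1):
    """Hypothetical stand-in: True if a polarity score reaches the threshold."""
    return polarity >= threshold

# Hypothetical polarity scores for five strings, between -1.0 and +1.0.
scores = [0.7, 0.15, 0.05, 0.0, -0.4]

for threshold in (0.0, 0.1, 0.2):
    count = sum(is_positive(score, threshold) for score in scores)
    print("threshold", threshold, "->", count, "of", len(scores), "positive")
```

The scores never change, yet the number of 'positive' strings moves from four to two to one, which is the kind of loop that can be run until the outcomes look right.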

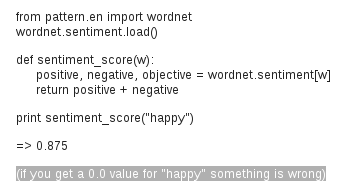

if you get a 0.0 value for “happy” something is wrong

answer by Tom de Smedt on CliPS's Google Groups about the sentiment_score() function, source: https://groups.google.com/forum/#!topic/pattern-for-python/FTeqb0p5eFM

other 'flags':

averaging polarity

<word form="amazing" wordnet_id="a-01282510" pos="JJ" sense="inspiring awe or admiration or wonder" polarity="0.8" subjectivity="1.0" intensity="1.0" confidence="0.9" />

<word form="amazing" wordnet_id="a-02359789" pos="JJ" sense="surprising greatly" polarity="0.4" subjectivity="0.8" intensity="1.0" confidence="0.9" />

items of an annotated adjectives wordlist, source: pattern-2.6/pattern/text/en/en.sentiment.xml

>>> from pattern.en import sentiment

>>> word = "amazing"

>>> print word, sentiment(word)

amazing (0.6000000000000001, 0.9)

example script, source: pattern-2.6/examples/03-en/07-sentiment.py
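The printed pair matches the average of the two annotated senses of 'amazing': (0.8 + 0.4) / 2 for polarity and (1.0 + 0.8) / 2 for subjectivity. The sketch below recomputes that average from the two XML entries with Python's standard `xml.etree.ElementTree`. The `<sentiment>` wrapper element and the `averaged_sentiment()` helper are hypothetical, and the match suggests, but does not prove, that Pattern averages over senses in this way.

```python
import xml.etree.ElementTree as ET

# The two annotated senses of "amazing", copied from the wordlist above.
# The <sentiment> wrapper element is a hypothetical root added for parsing.
LEXICON = """<sentiment>
<word form="amazing" wordnet_id="a-01282510" pos="JJ" sense="inspiring awe or admiration or wonder" polarity="0.8" subjectivity="1.0" intensity="1.0" confidence="0.9" />
<word form="amazing" wordnet_id="a-02359789" pos="JJ" sense="surprising greatly" polarity="0.4" subjectivity="0.8" intensity="1.0" confidence="0.9" />
</sentiment>"""

def averaged_sentiment(form, xml_text=LEXICON):
    """Average polarity and subjectivity over all senses of a word form."""
    root = ET.fromstring(xml_text)
    senses = [w for w in root.iter("word") if w.get("form") == form]
    if not senses:
        return None  # the word was never annotated
    polarity = sum(float(w.get("polarity")) for w in senses) / len(senses)
    subjectivity = sum(float(w.get("subjectivity")) for w in senses) / len(senses)
    return polarity, subjectivity

print(averaged_sentiment("amazing"))
```

Averaging flattens two quite different meanings ('inspiring awe' and 'surprising greatly') into one number that belongs to neither of them.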

annotating subjectivity

<word form="haha" wordnet_id="" pos="UH" polarity="0.2" subjectivity="0.3" intensity="1.0" confidence="0.9" />

<word form="hahaha" wordnet_id="" pos="UH" polarity="0.2" subjectivity="0.4" intensity="1.0" confidence="0.9" />

<word form="hahahaha" wordnet_id="" pos="UH" polarity="0.2" subjectivity="0.5" intensity="1.0" confidence="0.9" />

<word form="hahahahaha" wordnet_id="" pos="UH" polarity="0.2" subjectivity="0.6" intensity="1.0" confidence="0.9" />

items of an annotated wordlist (interjections), source: pattern-2.6/pattern/text/en/en.sentiment.xml
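The 'haha' entries show a hand-made rule: every extra 'ha' adds 0.1 subjectivity, and the list stops at five. The sketch below assumes a plain dictionary lookup over those four annotated values. It is a simplification: how Pattern actually treats unlisted forms is not shown in the source, so the `subjectivity()` helper is hypothetical.

```python
# Hand-annotated subjectivity values for laughter, copied from the wordlist above.
SUBJECTIVITY = {"haha": 0.3, "hahaha": 0.4, "hahahaha": 0.5, "hahahahaha": 0.6}

def subjectivity(word):
    """Look up a word; forms that nobody annotated get no score at all."""
    return SUBJECTIVITY.get(word.lower())

for laugh in ("haha", "hahahaha", "hahahahahaha"):
    print(laugh, subjectivity(laugh))
```

In this sketch a sixth 'ha' does not make the laugh more subjective; it makes it disappear from the analysis, because the annotator's list ended one step earlier.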

1.2 – text mining applications

applications of text mining

of Pattern, Weka, and the World Well Being Project → listed here

This shows that text mining has been applied across very different fields, which makes it seem a sort of 'holy grail' that solves a lot of problems.

(i'm not sure if this is needed)

links