User:Ruben/Prototyping/Sound and Voice

A project using voice recognition (Pocketsphinx) with Python. [1]

This script has undergone many iterations.

The first version merely extracted the spoken pieces.

The second version created an ugly graph to show how much was spoken in a certain part of a film (according to the speech recognition, which often detects things that are not there).
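The counting behind such a graph can be sketched roughly as follows. This is not the original script, only a minimal illustration. It assumes the recognizer's output has already been reduced to a list of `(start, end)` times in seconds; the names `speech_per_bin` and `text_graph` and the one-minute bin size are made up for this example.

```python
import math


def speech_per_bin(segments, duration, bin_size=60.0):
    """Return the seconds of detected speech in each bin of the film.

    segments: list of (start, end) times in seconds (assumed input format).
    duration: total length of the film in seconds.
    """
    n_bins = math.ceil(duration / bin_size)
    totals = [0.0] * n_bins
    for start, end in segments:
        # Clamp the segment to the film's length.
        start = max(0.0, start)
        end = min(duration, end)
        if end <= start:
            continue
        first = int(start // bin_size)
        last = min(int(end // bin_size), n_bins - 1)
        for i in range(first, last + 1):
            lo = i * bin_size
            hi = lo + bin_size
            # Add only the part of the segment that falls inside this bin.
            totals[i] += max(0.0, min(end, hi) - max(start, lo))
    return totals


def text_graph(totals, bin_size=60.0, width=40):
    """Draw one bar of '#' characters per bin, scaled to the bin size."""
    lines = []
    for i, seconds in enumerate(totals):
        bar = "#" * round(width * seconds / bin_size)
        lines.append(f"{i * bin_size:>7.0f}s |{bar}")
    return "\n".join(lines)
```

Calling `print(text_graph(speech_per_bin(segments, duration)))` gives an (equally ugly) text version of the graph. False detections from the recognizer end up in the bars just like real speech does.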

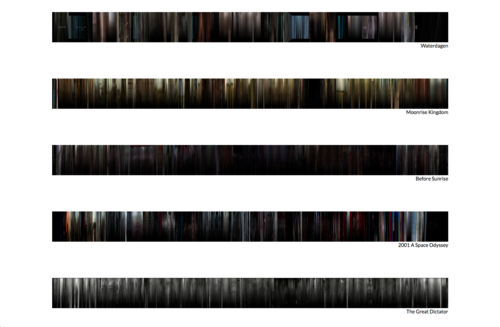

A third version could detect the spoken language using Pocketsphinx. It then used ffmpeg and ImageMagick to extract frames from the film and append them into a single image. Wherever there was spoken text, this image was overlaid with a black gradient, so as to 'hide' the picture.
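A rough sketch of how the frame steps could be wired together from Python is shown below. It is not the original script. The file names, the ten-second interval, and the helper names are all assumptions for illustration, and speech is assumed to be available as `(start, end)` times in seconds. The functions only build the command lines (ffmpeg's `fps` filter and ImageMagick's `+append` operator) and work out which frames coincide with speech; the gradient overlay itself is left out.

```python
def extract_frames_cmd(film, out_pattern="frame_%04d.png", every=10):
    """ffmpeg command that keeps one frame per `every` seconds of film."""
    return ["ffmpeg", "-i", film, "-vf", f"fps=1/{every}", out_pattern]


def append_frames_cmd(frames, out="strip.png"):
    """ImageMagick command that joins the frames left to right (+append)."""
    return ["convert", *frames, "+append", out]


def frames_with_speech(segments, n_frames, every=10):
    """Return one flag per frame: True if speech overlaps its time slot.

    Frame i is treated as covering [i * every, (i + 1) * every) seconds,
    which only approximates the moment ffmpeg actually samples.
    """
    flags = []
    for i in range(n_frames):
        lo, hi = i * every, (i + 1) * every
        flags.append(any(start < hi and end > lo for start, end in segments))
    return flags
```

The command lists can be passed to `subprocess.run(cmd, check=True)` when ffmpeg and ImageMagick are installed. The flags from `frames_with_speech` mark the parts of the combined image that would receive the black gradient.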