User:Pedro Sá Couto/Prototyping 2nd

Website

RICHFOLKS.CLUB https://git.xpub.nl/pedrosaclout/special_issue_08_site

Mastodon Python API

Python scripts

My git

https://git.xpub.nl/pedrosaclout/

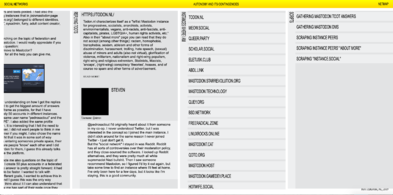

Gather Direct messages

- Based on André's code: https://git.xpub.nl/XPUB/MastodonAPI/src/branch/master/direct-msgs.py

- https://git.xpub.nl/pedrosaclout/dms_mastodon_api

```python
import datetime

from mastodon import Mastodon

today = datetime.date.today()

# Line 1 of token.txt is the access token for instances[0], line 2 for instances[1], and so on
instances = ["https://todon.nl/", "https://quey.org/"]

with open("dms_results.txt", "a+") as text_file, open("token.txt", "r") as token:
    text_file.write("Data collected on : " + str(today) + "\n\n")

    # Each token_line is already one line of token.txt, so there is no need to read the file again
    for token_line, base_url in zip(token, instances):
        print(base_url)  # the token is a secret, so it is not printed
        mastodon = Mastodon(access_token=token_line.strip(), api_base_url=base_url)

        # Mastodon direct messages are the same as toots (status updates),
        # except that their visibility is "direct" rather than
        # "public", "unlisted" or "private".
        my_credentials = mastodon.account_verify_credentials()
        my_id = my_credentials["id"]
        # Only statuses posted by this account (first page of results),
        # so DMs received from other accounts are not included
        my_statuses = mastodon.account_statuses(id=my_id)

        for status in my_statuses:
            if status["visibility"] != "direct":  # keep only direct messages
                continue
            avatar = status["account"]["avatar"]
            name = status["account"]["display_name"]
            bot = status["account"]["bot"]
            content = status["content"]

            entry = "Avatar:\n{}\n\nName:\n{}\n\nBot:\n{}\n\nContent:\n{}\n\n\n".format(
                avatar, name, bot, content)
            print(entry)
            text_file.write(entry)
```
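Mastodon delivers a toot's `content` as an HTML fragment, so the `Content:` entries written by this script still contain tags such as `<p>` and `<span>`. Below is a minimal sketch, using only Python's standard library, of filtering already-fetched status dicts down to direct messages and reducing their content to plain text. `html_to_text` and `direct_messages` are hypothetical helper names, and the sketch assumes each status dict has the `visibility`, `content` and `account` fields the script reads.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect the text of an HTML fragment, one line per <p> or <br>."""

    def __init__(self):
        super().__init__()  # convert_charrefs=True turns &amp; into & in handle_data
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "br":
            self.parts.append("\n")

    def handle_endtag(self, tag):
        if tag == "p":
            self.parts.append("\n")

    def handle_data(self, data):
        self.parts.append(data)


def html_to_text(fragment):
    """Strip the tags from a toot's HTML content (hypothetical helper)."""
    parser = _TextExtractor()
    parser.feed(fragment)
    parser.close()
    return "".join(parser.parts).strip()


def direct_messages(statuses):
    """Keep only statuses with visibility 'direct' and flatten the fields used above."""
    return [
        {
            "avatar": s["account"]["avatar"],
            "name": s["account"]["display_name"],
            "bot": s["account"]["bot"],
            "content": html_to_text(s["content"]),
        }
        for s in statuses
        if s["visibility"] == "direct"
    ]
```

For example, `direct_messages(mastodon.account_statuses(id=my_id))` would give one plain-text record per direct message in that page of statuses.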

Gather answers (replies) to a toot

```python
import datetime
import time

from mastodon import Mastodon

today = datetime.date.today()

# toot_id[n], instances[n] and line n+1 of token.txt all refer to the same post.
# FOR EXAMPLE: toot_id[0] goes with instances[0] and with line 1 of token.txt.
# IMPORTANT: keep toot_id, instances and token.txt in the same order.
toot_id = [101767654564328802, 101767613341391125, 101767845648108564, 101767859212894722, 101767871935737959, 101767878557811327, 101772545369017811, 101767981291624379, 101767995246055609, 101772553091703710, 101768372248628715, 101768407716536393, 101768414826737939, 101768421746838431, 101771801100381784, 101771425484725792, 101771434017039442, 101771437693805317, 101782008831021451, 101795233034162198]

instances = ["https://todon.nl/", "https://meow.social/", "https://queer.party/", "https://scholar.social/", "https://eletusk.club/", "https://abdl.link/", "https://mastodon.starrevolution.org/", "https://mastodon.technology/", "https://quey.org/", "https://bsd.network/", "https://freeradical.zone/", "https://linuxrocks.online/", "https://mastodont.cat/", "https://qoto.org/", "https://mastodon.host/", "https://mastodon.gamedev.place/", "https://hotwife.social/", "https://hostux.social/", "https://mastodon.social/", "https://post.lurk.org/"]

with open("answers_results.txt", "a+") as text_file, open("token.txt", "r") as token:
    text_file.write("Data collected on : " + str(today) + "\n\n")

    # token_line is one of the lines of token.txt; since it was already read
    # by iterating over the file, there is no need to read it again
    for token_line, base_url, toot_id_current in zip(token, instances, toot_id):
        mastodon = Mastodon(access_token=token_line.strip(), api_base_url=base_url)

        # print the instance name and description
        description = mastodon.instance()["description"]
        print("Instance:\n" + base_url + "\n")
        print("Description:\n" + str(description) + "\n")
        text_file.write("<h3 class='instance'>" + base_url + "</h3>\n\n")
        text_file.write("<h4 class='description'>" + str(description) + "</h4>\n\n")

        # "descendants" holds the replies in the thread below the toot
        descendants = mastodon.status_context(id=toot_id_current)["descendants"]
        print(toot_id_current)

        for answer in descendants:
            # each descendant is already a full status dict,
            # so there is no need to fetch it again with mastodon.status()
            avatar = answer["account"]["avatar"]
            name = answer["account"]["display_name"]
            bot = answer["account"]["bot"]
            toot = answer["content"]

            text_file.write("<img src='" + str(avatar) + "' />\n\n")
            text_file.write("<h3 class='name'>" + str(name) + "</h3>\n\n")
            text_file.write("<h4 class='bot'>Bot: " + str(bot) + "</h4>\n\n")
            text_file.write("<p class='answer'>\n" + str(toot) + "</p>\n\n")

            print(answer["id"])
            print("Avatar:\n{}\n\nName:\n{}\n\nBot:\n{}\n\nContent:\n{}\n\n".format(
                avatar, name, bot, toot))

        time.sleep(2)  # pause between instances
```
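Two details of this script can be checked without touching the network. `status_context()` returns every reply in the thread under the toot as `descendants`, including replies to replies; keeping only the statuses whose `in_reply_to_id` equals the toot's id leaves just the direct answers. And because tokens, instances and toot ids are matched purely by position, a length check catches a missing line in token.txt before any request is made. A minimal sketch with two hypothetical helpers, `direct_replies` and `pair_by_position`:

```python
def direct_replies(descendants, toot_id):
    """Keep only statuses that answer toot_id itself, not replies to replies."""
    # ids may arrive as ints or as strings depending on the client, so compare as text
    return [s for s in descendants if str(s.get("in_reply_to_id")) == str(toot_id)]


def pair_by_position(tokens, instances, toot_ids):
    """Zip the three lists, refusing to run when their lengths differ."""
    if not len(tokens) == len(instances) == len(toot_ids):
        raise ValueError(
            "token.txt has %d lines but there are %d instances and %d toot ids"
            % (len(tokens), len(instances), len(toot_ids))
        )
    return list(zip(tokens, instances, toot_ids))
```

Inside the loop, `direct_replies(descendants, toot_id_current)` would replace `descendants` when only first-level answers are wanted.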

Scrape peers' descriptions and images from an instance

```python
# import libraries
import datetime
import os
import time

import requests
from mastodon import Mastodon
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.firefox.service import Service  # Selenium 4 API

# Read the token once: a second token.read() would return an empty string
with open("token.txt", "r") as token:
    access_token = token.read().strip()

mastodon = Mastodon(access_token=access_token, api_base_url="https://todon.nl")
peers = mastodon.instance_peers()  # domain names of the instances todon.nl knows about

today = datetime.date.today()
# geckodriver is expected in the same folder as this script
geckodriver_path = os.path.join(os.path.dirname(os.path.realpath(__file__)), "geckodriver")
os.makedirs("scrape", exist_ok=True)

with open("scrape/results.txt", "a+") as text_file:
    text_file.write("Data collected on : " + str(today) + "\n\n")

    for peer in peers[:200]:  # only the first 200 peers
        time.sleep(0.5)
        url = "https://" + str(peer)
        print("Instance:\n" + peer)
        text_file.write("Instance:\n" + peer + "\n")

        # Open a new Firefox session for this peer
        driver = webdriver.Firefox(service=Service(executable_path=geckodriver_path))
        # Implicit wait: how long Selenium looks for an element before it throws an exception
        driver.implicitly_wait(5)
        try:
            driver.get(url)
            time.sleep(3)
            about = driver.find_element(By.CSS_SELECTOR, ".landing-page__short-description")
            print("About:\n" + about.text)
            text_file.write("About:\n" + about.text + "\n\n")
            time.sleep(1)
            try:
                # Get the image source and download the image
                img = driver.find_element(By.XPATH, "/html/body/div[1]/div/div/div[3]/div[1]/img")
                src = img.get_attribute("src")
                picture_request = requests.get(src, timeout=30)
                if picture_request.status_code == 200:
                    with open("scrape/{}.jpg".format(peer), "wb") as image_file:
                        image_file.write(picture_request.content)
                    print("Saved image")
            except Exception:
                print("Impossible to save image")
                text_file.write("Impossible to save image\n\n")
                time.sleep(0.5)
        except Exception:
            text_file.write("Impossible to check instance\n\n")
            print("Status:\nImpossible to check instance")
            time.sleep(1)
        finally:
            # Close the browser before moving on to the next peer
            driver.quit()
            print("Closing Window")
```
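The landing-page XPath above depends on the HTML of whichever Mastodon version each peer runs. One alternative, sketched below under the assumption that the peer is a Mastodon server exposing the public `/api/v1/instance` endpoint, is to read the description and thumbnail from JSON. The field names `short_description`, `description` and `thumbnail` are those of the v1 instance entity; newer servers also offer `/api/v2/instance` with a different shape, and non-Mastodon peers may not answer at all. `instance_summary`, `image_extension` and `fetch_peer` are hypothetical helpers, and the `EXTENSIONS` table is an assumed list; `image_extension` picks a file extension from the `Content-Type` header instead of always saving `.jpg`.

```python
# Extensions for the image types peers are most likely to serve (assumed list)
EXTENSIONS = {"image/jpeg": ".jpg", "image/png": ".png", "image/gif": ".gif", "image/webp": ".webp"}


def instance_summary(info):
    """Pick the 'about' text and thumbnail URL out of a /api/v1/instance dict."""
    return {
        "about": info.get("short_description") or info.get("description") or "",
        "thumbnail": info.get("thumbnail"),
    }


def image_extension(content_type):
    """Map a Content-Type header such as 'image/png; charset=binary' to an extension."""
    mime = (content_type or "").split(";")[0].strip().lower()
    return EXTENSIONS.get(mime, ".jpg")  # fall back to .jpg, as the script does


def fetch_peer(peer, timeout=10):
    """Return instance_summary() for one peer, or None if it does not answer with JSON."""
    import requests  # imported here so the parsing helpers work without requests installed

    try:
        response = requests.get("https://%s/api/v1/instance" % peer, timeout=timeout)
        response.raise_for_status()
        info = response.json()
    except (requests.RequestException, ValueError):
        return None
    return instance_summary(info) if isinstance(info, dict) else None
```

Inside the peers loop, `fetch_peer(peer)` could stand in for the Selenium session, and `image_extension(picture_request.headers.get("Content-Type"))` could name the downloaded file.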

Scrape the "about/more" page of an instance's peers

```python
# import libraries
import datetime
import os
import time

import requests
from mastodon import Mastodon
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.firefox.service import Service  # Selenium 4 API

# Read the token once: a second token.read() would return an empty string
with open("token.txt", "r") as token:
    access_token = token.read().strip()

mastodon = Mastodon(access_token=access_token, api_base_url="https://todon.nl")
peers = mastodon.instance_peers()  # domain names of the instances todon.nl knows about

today = datetime.date.today()
# geckodriver is expected in the same folder as this script
geckodriver_path = os.path.join(os.path.dirname(os.path.realpath(__file__)), "geckodriver")
os.makedirs("AboutMore", exist_ok=True)

with open("AboutMore/results.txt", "a+") as text_file:
    text_file.write("Data collected on : " + str(today) + "\n\n")

    for peer in peers[:200]:  # only the first 200 peers
        time.sleep(0.5)
        url = "https://" + str(peer) + "/about/more"
        print("Instance:\n" + peer)
        text_file.write("Instance:\n" + peer + "\n")

        # Open a new Firefox session for this peer
        driver = webdriver.Firefox(service=Service(executable_path=geckodriver_path))
        # Implicit wait: how long Selenium looks for an element before it throws an exception
        driver.implicitly_wait(5)
        try:
            driver.get(url)
            time.sleep(3)
            about_more = driver.find_element(By.CSS_SELECTOR, ".rich-formatting")
            print("About more:\n" + about_more.text)
            text_file.write("About more:\n" + about_more.text + "\n\n")
            time.sleep(1)
            try:
                # Get the image source and download the image
                img = driver.find_element(By.XPATH, "/html/body/div/div[2]/div/div[1]/div/div/img")
                src = img.get_attribute("src")
                picture_request = requests.get(src, timeout=30)
                if picture_request.status_code == 200:
                    with open("AboutMore/{}.jpg".format(peer), "wb") as image_file:
                        image_file.write(picture_request.content)
                    print("Saved image")
            except Exception:
                print("Impossible to save image")
                text_file.write("Impossible to save image\n\n")
                time.sleep(0.5)
        except Exception:
            text_file.write("No about more\n\n")
            print("Status:\nNo about more")
            time.sleep(1)
        finally:
            # Close the browser before moving on to the next peer
            driver.quit()
            print("Closing Window")
```
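When an instance serves `/about/more` as server-rendered HTML (an assumption: recent Mastodon versions render their about page with JavaScript, which is where Selenium is needed), the `.rich-formatting` text can also be pulled out with the standard library's `html.parser`. A minimal sketch: the hypothetical `text_of_class` returns the text inside the first element carrying a given class, handles void elements such as `<br>` and `<img>`, and otherwise assumes well-formed markup in which every opened element is closed.

```python
from html.parser import HTMLParser

# Elements that never get a closing tag, so they must not change the nesting depth
VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
        "link", "meta", "source", "track", "wbr"}
# Closing one of these ends a block of text, so a separating space is added
BREAKS = {"p", "div", "li", "h1", "h2", "h3", "h4", "h5", "h6"}


class ClassTextExtractor(HTMLParser):
    """Collect the text inside the first element that carries a given CSS class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.depth = 0  # greater than 0 while inside the target element
        self.done = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if self.done:
            return
        if self.depth:
            if tag == "br":
                self.parts.append(" ")
            elif tag not in VOID:
                self.depth += 1
            return
        if tag in VOID:
            return
        classes = (dict(attrs).get("class") or "").split()
        if self.css_class in classes:
            self.depth = 1

    def handle_endtag(self, tag):
        if not self.depth or tag in VOID:
            return
        if tag in BREAKS:
            self.parts.append(" ")
        self.depth -= 1
        if self.depth == 0:
            self.done = True

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def text_of_class(page_html, css_class):
    """Return the whitespace-normalised text of the first element with css_class."""
    parser = ClassTextExtractor(css_class)
    parser.feed(page_html)
    parser.close()
    return " ".join("".join(parser.parts).split())
```

With `requests`, a call such as `text_of_class(requests.get(url, timeout=30).text, "rich-formatting")` would return an empty string when the page is rendered client-side, which is a quick way to tell which peers still need the browser.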

Scrape instances.social

```python
# import libraries
import datetime
import os
import time

import requests
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from selenium.webdriver.firefox.service import Service  # Selenium 4 API

today = datetime.date.today()

# Get the url from the terminal
url = input("Enter instances.social url (include https:// ): ")
# For example, if you want to look into instances on which everything is allowed:
# url = "https://instances.social/list#lang=en&allowed=nudity_nocw,nudity_all,pornography_nocw,pornography_all,illegalContentLinks,spam,advertising,spoilers_nocw&prohibited=&users="

# Open a new Firefox session; geckodriver is expected in the same folder as this script
geckodriver_path = os.path.join(os.path.dirname(os.path.realpath(__file__)), "geckodriver")
driver = webdriver.Firefox(service=Service(executable_path=geckodriver_path))
# Implicit wait: how long Selenium looks for an element before it throws an exception
driver.implicitly_wait(10)
driver.get(url)
time.sleep(3)

item = 1  # nth-child index of the next instance in the list
# Index of the first new item after each click on "load more"
load_more_offsets = [52, 102, 152, 202, 252, 302, 352, 402]
next_offset = 0
image_count = 0  # used to name the saved images

with open("results.txt", "a+") as text_file:
    text_file.write("Data collected on : " + str(today) + "\n\n")
    while True:
        try:
            driver.find_element(By.CSS_SELECTOR, "a.list-group-item:nth-child(%d)" % item).click()
            instance_url = driver.find_element(By.CSS_SELECTOR, "#modalInstanceInfoLabel")
            description = driver.find_element(By.ID, "modalInstanceInfo-description")
            print("Instance:\n" + instance_url.text)
            text_file.write("Instance: \n" + instance_url.text + "\n")
            print("Description:\n" + description.text)
            text_file.write("Description: \n" + description.text + "\n\n")
            time.sleep(0.5)

            # Open the instance in a new tab (Keys.COMMAND is the macOS modifier; use Keys.CONTROL elsewhere)
            driver.find_element(By.CSS_SELECTOR, "#modalInstanceInfo-btn-go").send_keys(Keys.COMMAND + Keys.ENTER)
            time.sleep(0.5)
            driver.switch_to.window(driver.window_handles[-1])  # go to the new tab
            time.sleep(1)
            try:
                # Get the image source and download the image
                img = driver.find_element(By.CSS_SELECTOR, ".landing-page__hero > img:nth-child(1)")
                src = img.get_attribute("src")
                picture_request = requests.get(src, timeout=30)
                if picture_request.status_code == 200:
                    with open("image%i.jpg" % image_count, "wb") as image_file:
                        image_file.write(picture_request.content)
                    print("Saved image")
            except Exception:
                print("Impossible to save image")
                time.sleep(0.5)

            # Close the new tab and go back to the original one
            driver.close()
            print("Closing Window")
            driver.switch_to.window(driver.window_handles[0])
            # Close the pop-up
            driver.find_element(By.CSS_SELECTOR, ".btn.btn-secondary").click()
            time.sleep(1)
            item += 1
            image_count += 1
        except Exception:
            # No instance at this index: load the next batch, or stop when none is left
            print("This is an exception")
            if next_offset >= len(load_more_offsets):
                break
            try:
                driver.find_element(By.CSS_SELECTOR, "#load-more-instances > a:nth-child(1)").click()
            except Exception:
                break
            item = load_more_offsets[next_offset]
            next_offset += 1

# Close the browser
driver.quit()
```
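The commented-out example URL for instances.social keeps its filters after the `#`, as `key=value` pairs joined by `&`, with list values separated by commas. A small hypothetical helper, `instances_social_url`, can rebuild such URLs from Python lists. It assumes the four keys seen in that example (`lang`, `allowed`, `prohibited`, `users`) are the ones instances.social reads and, like the example, does not percent-encode the values.

```python
def instances_social_url(lang="en", allowed=(), prohibited=(), users=""):
    """Build an instances.social list URL; the filters live in the #fragment (assumed keys)."""
    params = [
        ("lang", lang),
        ("allowed", ",".join(allowed)),
        ("prohibited", ",".join(prohibited)),
        ("users", users),
    ]
    # Joined by hand: urlencode would turn the commas into %2C
    fragment = "&".join("%s=%s" % (key, value) for key, value in params)
    return "https://instances.social/list#" + fragment
```

The returned string can be pasted at the `input()` prompt, or assigned to `url` in place of the prompt.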

WeasyPrint

GETTING STARTED

https://weasyprint.readthedocs.io/en/latest/install.html

https://weasyprint.org/

CHECK THE WIKI PAGE ON IT

http://pzwiki.wdka.nl/mediadesign/Weasyprint

SOME CSS TESTED FOR WEASYPRINT

http://test.weasyprint.org/
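Because the answers script writes HTML fragments (`<h3>`, `<h4>`, `<p>`, `<img>`) into `answers_results.txt`, one way to hand them to WeasyPrint is to wrap them in a complete page that carries print CSS. A minimal sketch: `wrap_fragments` and `render_pdf` are hypothetical names, the `@page` rules are example values, and the only WeasyPrint calls used are `HTML(string=..., base_url=...)` and `write_pdf()`.

```python
import html

# Example print rules (assumed values; adjust to the publication)
PAGE_CSS = """
@page { size: A5; margin: 15mm; }
h3.instance { break-before: page; }
img { width: 20mm; }
"""


def wrap_fragments(fragments_html, title="Answers"):
    """Wrap HTML fragments in a complete page that carries the print CSS."""
    return (
        "<!DOCTYPE html>\n<html>\n<head>\n<meta charset='utf-8'>\n"
        "<title>" + html.escape(title) + "</title>\n"
        "<style>" + PAGE_CSS + "</style>\n"
        "</head>\n<body>\n" + fragments_html + "\n</body>\n</html>\n"
    )


def render_pdf(fragments_path="answers_results.txt", pdf_path="answers.pdf"):
    """Read the fragments file and let WeasyPrint turn it into a PDF."""
    from weasyprint import HTML  # imported here so wrap_fragments works without WeasyPrint

    with open(fragments_path, encoding="utf-8") as source:
        page = wrap_fragments(source.read())
    # base_url lets relative links in the fragments resolve from the current folder
    HTML(string=page, base_url=".").write_pdf(pdf_path)
```

Calling `render_pdf()` after the answers script has run would produce `answers.pdf`, with each instance starting on a new page.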

Taking a High-DPI Website Screenshot in Firefox

FROM: https://www.drlinkcheck.com/blog/high-dpi-website-screenshot-firefox

Found an easy way to take high-DPI screenshots via the Firefox Web Console.

1. Open the Web Console by pressing Ctrl-Shift-K (Cmd-Option-K on macOS).
2. Type the following command: `:screenshot --dpr 2`
3. Press Enter.

This results in a screenshot of the currently visible area being saved to the Downloads folder (with an auto-generated filename in the form of "Screen Shot yyyy-mm-dd at hh.mm.ss.png"). The --dpr 2 argument causes Firefox to use a device-pixel-ratio of 2, capturing the screen at two times the usual resolution.

If you want to take a screenshot of the full page, append --fullpage to the command. Here's the full list of arguments you can use:

- `--clipboard`: copies the screenshot to the clipboard
- `--delay 5`: waits the specified number of seconds before taking the screenshot
- `--dpr 2`: uses the specified device-pixel ratio for the screenshot
- `--file`: saves the screenshot to a file, even if the `--clipboard` argument is specified
- `--filename screenshot.png`: saves the screenshot under the specified filename
- `--fullpage`: takes a screenshot of the full page, not just the currently visible area
- `--selector "#id"`: takes a screenshot of the element that matches the specified CSS query selector

Unfortunately, there isn't (yet?) a command for taking a screenshot at a specified window or viewport size. A good workaround is to open the page in Responsive Design Mode in Firefox:

1. Activate Responsive Design Mode by pressing Ctrl-Shift-M (Cmd-Option-M on macOS).
2. Adjust the screen size to your liking.
3. Take a screenshot as described above.

FULL LINE TO INPUT IN CONSOLE:

:screenshot --fullpage --dpr 2 --file --filename screenshot.png
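A similar high-DPI capture can be scripted with Selenium. This is a hedged sketch, assuming Selenium 4 (whose Firefox driver offers `get_full_page_screenshot_as_file`) and geckodriver on the PATH. The Firefox preference `layout.css.devPixelsPerPx` stands in for `--dpr`, and `set_window_size` approximates the viewport sizing of Responsive Design Mode (it sets the outer window size). `hidpi_screenshot` is a hypothetical helper, and the resulting pixel size is worth comparing with a console screenshot before relying on it.

```python
def hidpi_screenshot(url, filename="screenshot.png", dpr=2.0, width=1280, height=800):
    """Save a full-page screenshot of url at roughly `dpr` times the usual resolution.

    Assumes Selenium 4 and geckodriver on the PATH (hypothetical helper).
    """
    from selenium import webdriver
    from selenium.webdriver.firefox.options import Options

    options = Options()
    options.add_argument("-headless")
    # Assumed equivalent of the console's --dpr flag
    options.set_preference("layout.css.devPixelsPerPx", str(dpr))
    driver = webdriver.Firefox(options=options)
    try:
        # Outer window size, a rough stand-in for Responsive Design Mode
        driver.set_window_size(width, height)
        driver.get(url)
        # Firefox-only call in Selenium 4; returns False if writing the file fails
        return driver.get_full_page_screenshot_as_file(filename)
    finally:
        driver.quit()
```

For example, `hidpi_screenshot("https://weasyprint.org/", filename="weasyprint.png")` would save a full-page capture next to the script.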