User:Manetta/media-objects/annotated-training-datasets: Difference between revisions

Revision as of 14:28, 28 May 2015

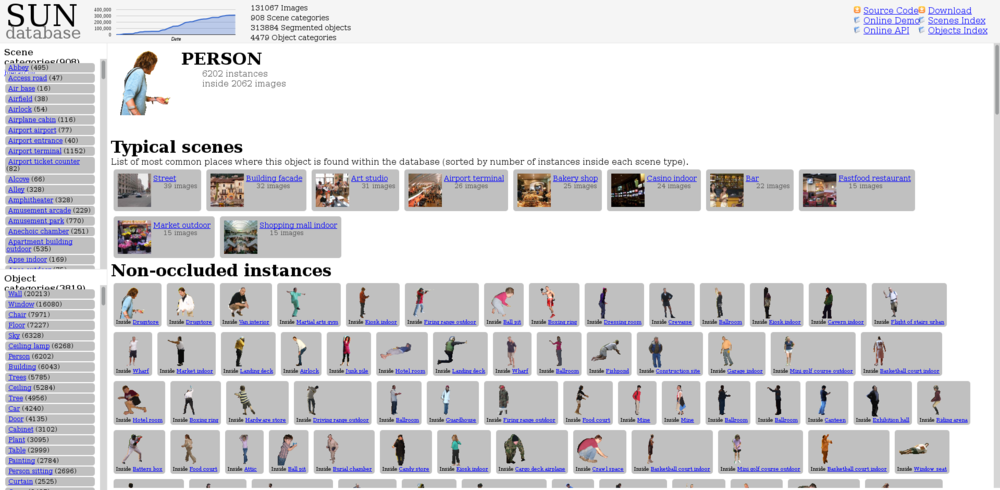

SUN database

Training sets of images --> visual mining.

"The goal of the SUN database project is to provide researchers in computer vision, human perception, cognition and neuroscience, machine learning and data mining, computer graphics and robotics, with a comprehensive collection of annotated images covering a large variety of environmental scenes, places and the objects within."

SUN database abstract:

"Scene categorization is a fundamental problem in computer vision. However, scene understanding research has been constrained by the limited scope of currently-used databases which do not capture the full variety of scene categories. Whereas standard databases for object categorization contain hundreds of different classes of objects, the largest available dataset of scene categories contains only 15 classes. In this paper we propose the extensive Scene UNderstanding (SUN) database that contains 899 categories and 130,519 images. We use 397 well-sampled categories to evaluate numerous state-of-the-art algorithms for scene recognition and establish new bounds of performance. We measure human scene classification performance on the SUN database and compare this with computational methods."

Images are annotated with words (using the WordNet lexicon), which establishes a certain 'truth' for the recognition algorithm. The images are the basis for a 'representational learning' system: the system is trained by scanning annotated example images and comparing them with each other.

SUN database structure

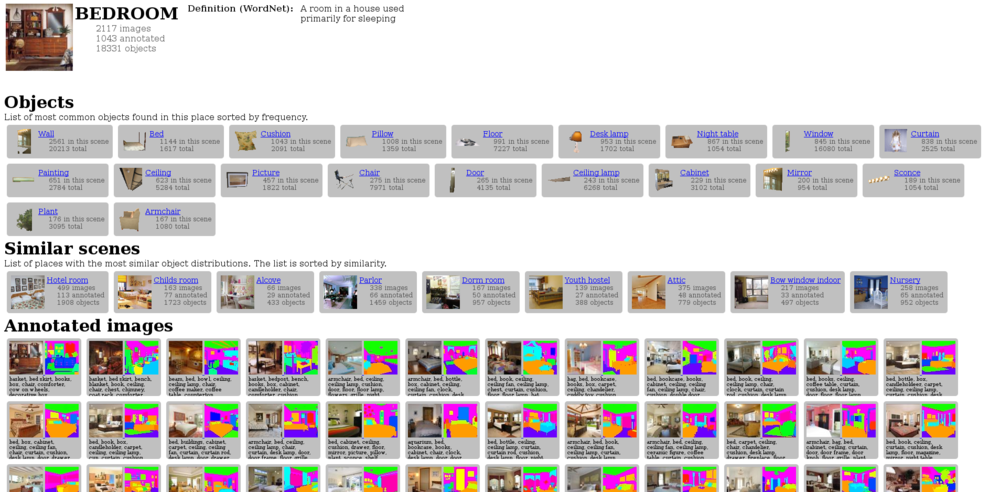

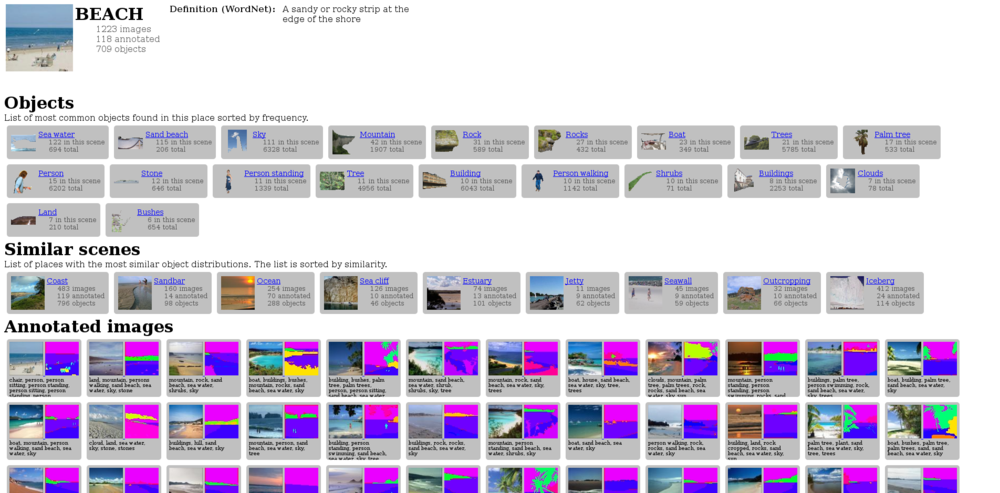

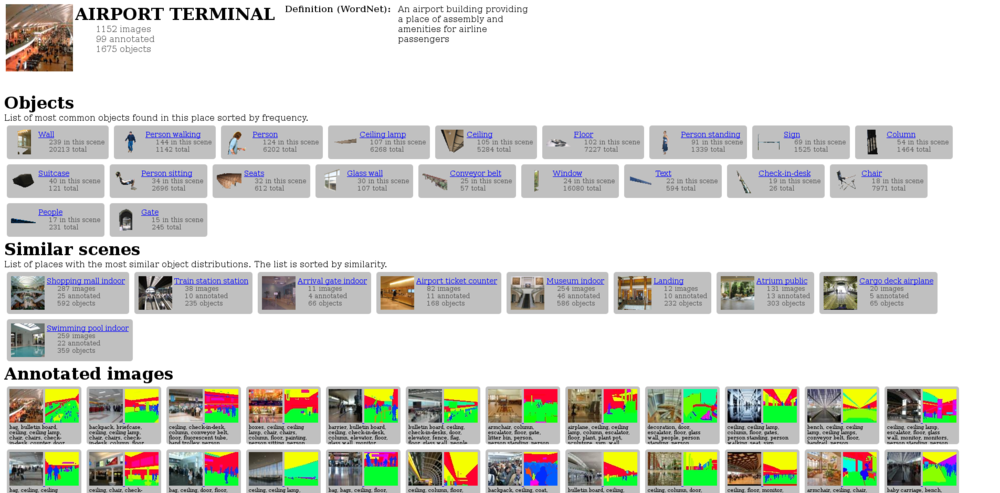

The SUN database (of Princeton University) annotates two types of images: scenes and objects. Objects are always presented with the name of the scene they appeared in.

The objects that appear most often are:

- wall (20213)

- window (16080)

- chair (7971)

- floor (7227)

- sky (6328)

- ceiling lamp (6268)

- person (6202)

- building (6043)

- trees (5785)

The scenes that appear most often are:

- living room (2385)

- bedroom (2117)

- kitchen (1755)

- beach (1223)

- dining room (1187)

- airport terminal (1152)

- castle (1126)

- church outdoor (1058)

- house (972)

- bathroom (956)

- playground (909)

- conference room (872)
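
Counts like the ones above can be obtained by tallying the labels over all annotated images. The following is a minimal sketch of that tally. The annotations in it are made up for illustration, and the `scene`/`objects` record layout is an assumption, not the actual SUN annotation format.

```python
from collections import Counter

# Hypothetical annotations: one scene label and a list of object labels per image.
annotations = [
    {"scene": "living room", "objects": ["wall", "window", "chair", "floor"]},
    {"scene": "bedroom", "objects": ["wall", "window", "floor"]},
    {"scene": "living room", "objects": ["wall", "chair"]},
]

# Tally how often each scene label and each object label occurs.
scene_counts = Counter(image["scene"] for image in annotations)
object_counts = Counter(obj for image in annotations for obj in image["objects"])

print(scene_counts.most_common(1))   # [('living room', 2)]
print(object_counts.most_common(1))  # [('wall', 3)]
```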

The scenes are structured in a three-level hierarchy tree. The two highest levels are:

- indoor
  * shopping and dining
  * workplace (office building, factory, lab, etc.)
  * home or hotel
  * transportation (vehicle interiors, stations, etc.)
  * sports and leisure
  * cultural (art, education, religion, military, law, politics, etc.)

- outdoor, natural
  * water, ice, snow
  * mountains, hills, desert, sky
  * forest, field, jungle
  * man-made elements

- outdoor, man-made
  * transportation (roads, parking, bridges, boats, airports, etc.)
  * cultural or historical building/place (military, religious)
  * sports fields, parks, leisure spaces
  * industrial and construction
  * houses, cabins, gardens, and farms
  * commercial buildings, shops, markets, cities, and towns
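
As a data structure, the two levels listed above could be written as a nested mapping. This is only a sketch: the names `SUN_HIERARCHY` and `parents_of` are invented here, the parenthetical examples are left out, and the third level (the scene categories themselves) is not included.

```python
# Two top levels of the SUN scene hierarchy, as listed on this page.
SUN_HIERARCHY = {
    "indoor": [
        "shopping and dining",
        "workplace",
        "home or hotel",
        "transportation",
        "sports and leisure",
        "cultural",
    ],
    "outdoor, natural": [
        "water, ice, snow",
        "mountains, hills, desert, sky",
        "forest, field, jungle",
        "man-made elements",
    ],
    "outdoor, man-made": [
        "transportation",
        "cultural or historical building/place",
        "sports fields, parks, leisure spaces",
        "industrial and construction",
        "houses, cabins, gardens, and farms",
        "commercial buildings, shops, markets, cities, and towns",
    ],
}


def parents_of(group):
    """Return every top-level branch that contains the given second-level group."""
    return [top for top, groups in SUN_HIERARCHY.items() if group in groups]


print(parents_of("transportation"))  # ['indoor', 'outdoor, man-made']
```

Written out this way, it becomes visible that a group such as "transportation" appears under more than one branch, so a scene's place in the tree depends on the indoor/outdoor decision made first.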

The SUN research project trains algorithms on the dataset, and their first results show the following accuracy per scene category:

- riding_arena → 94%

- sauna → 94%

- sky → 92%

- wave → 90%

- car_interior/frontseat → 88%

- pagoda → 88%

- volleyball_court/indoor → 86%

- tennis_court/indoor → 86%

- underwater/coral_reef → 84%

- bow_window/outdoor → 82%

- cockpit → 80%

- limousine_interior → 80%

- rock_arch → 80%

- squash_court → 80%

- florist_shop/indoor → 78%

- pantry → 78%

- ocean → 76%

- skatepark → 76%

- electrical_substation → 74%

- oast_house → 74%

- oilrig → 74%

- parking_garage/indoor → 74%

- podium/outdoor → 74%

- subway_interior → 74%
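
The page does not say how these percentages were measured. A common reading is per-category accuracy: the share of test images of a scene that the algorithm labels correctly. The sketch below computes that figure under this assumption. The toy data is hypothetical, and the choice of 50 test images is a guess that would be consistent with the 2% steps in the list above.

```python
from collections import defaultdict


def per_class_accuracy(true_labels, predicted_labels):
    """Share of correctly labelled test images, computed separately per scene category."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, guess in zip(true_labels, predicted_labels):
        total[truth] += 1
        if truth == guess:
            correct[truth] += 1
    return {scene: correct[scene] / total[scene] for scene in total}


# Hypothetical toy data: 50 test images of "sauna", 47 of them labelled correctly.
truth = ["sauna"] * 50
guess = ["sauna"] * 47 + ["bathroom"] * 3
print(per_class_accuracy(truth, guess))  # {'sauna': 0.94}
```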

This organization and structuring of objects and scenes is an attempt to create a universal (visual) language that would enable algorithms to recognize any depicted scene. Algorithms that use this dataset will base their results on a 'truth' defined by this collection of images. The hierarchy tree and the most frequently appearing scenes and objects create a hierarchy of importance within the dataset, which influences the 'truth' of any outcome. As the outcome of a recognition algorithm would also be considered true, the act of annotating the image collection is a moment in between two 'truth systems' (as Femke Snelting phrased it during Cqrrelations 2015).

The scenes are accompanied by a dictionary file, in which annotators also comment on their classification process. For example:

a — abbey --> a Christian monastery or convent under the government of an Abbot or an Abbess

'We should think about combining nunnery, monastery, priory, and abbey (all abbeys and priories are either nunneries or monasteries, and I'm not sure that those latter two are visually distinct)'

These comments show the doubts and to-do lists that came up during the annotation process. This gave me the idea to make an encyclopedia of consideration: listing and publishing the comments of the annotators.
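
Collecting the comments could start from a small parser. The sketch below assumes a file layout that is not confirmed by the source: one entry per line in the form `name --> definition`, followed by zero or more comment lines wrapped in single quotes. The function name `collect_comments` is invented, and the sample comment is a shortened version of the one quoted above.

```python
def collect_comments(lines):
    """Pair each dictionary entry with the annotator comments that follow it."""
    entries = {}
    current = None
    for raw in lines:
        line = raw.strip()
        if "-->" in line:
            # Assumed entry format: "name --> definition"
            name, definition = (part.strip() for part in line.split("-->", 1))
            current = name
            entries[current] = {"definition": definition, "comments": []}
        elif (
            current is not None
            and len(line) > 1
            and line.startswith("'")
            and line.endswith("'")
        ):
            # Assumed comment format: a line wrapped in single quotes
            entries[current]["comments"].append(line[1:-1])
    return entries


sample = [
    "abbey --> a Christian monastery or convent under the government of an Abbot or an Abbess",
    "'We should think about combining nunnery, monastery, priory, and abbey'",
]

for name, entry in collect_comments(sample).items():
    for comment in entry["comments"]:
        print(f"{name}: {comment}")
```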