User:Anita!/Special Issue 24 notes

Making lists

I spent the morning in the south using list-making as an observation method. I was already familiar with this method: I like making lists and make them quite often. I made many lists, but the ones that stood out to me were:

- people I made eye contact with

- license plates with the number 4 in them near Zuidplein

- colors in the hair of a person on the metro

- things that I think of when I hear rain on my list

In the afternoon we gathered all the list titles in a pad; later in the evening I tried to draw a map connecting the lists:

Knitting city noise

Knitting city noise is a part of the project that may or may not end up being made.

I want to connect a digital knitting machine (which has a small computer inside it) to a sound sensor. The sensor will react to the sounds around it (city noise) and switch the colour of the yarn being used, creating a distinct fabric for each event it listens to.
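A minimal sketch of the colour-switching logic, written in Python to try out the idea before touching the machine. It assumes the sensor output has already been turned into one noise/no-noise reading per stitch, that there are only two yarns, and that the yarn switches each time a new noise event starts; the function name and yarn labels are hypothetical, not how the machine itself works.

```python
# Hypothetical model: every new noise event (a quiet -> noisy transition)
# switches the yarn to the other colour; it stays there until the next event.
def colours_from_events(readings, colours=("main", "contrast")):
    """Return one yarn colour per reading, toggling at the start of each event."""
    current = 0          # index into `colours`, start with the main yarn
    previous = False     # was the previous reading noisy?
    result = []
    for noisy in readings:
        if noisy and not previous:   # a new noise event starts here
            current = 1 - current    # switch to the other yarn
        result.append(colours[current])
        previous = noisy
    return result

# Two separate noise events in six readings
print(colours_from_events([False, True, True, False, True, False]))
# → ['main', 'contrast', 'contrast', 'contrast', 'main', 'main']
```

Toggling on the start of an event (rather than on every noisy reading) means a long sound like a passing tram shows up as one block of colour instead of a flickering pattern.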

This could be done by placing the sensors in different locations, listening to the sounds of the city, and looking at how the code and the machine interpret them, translated visually into a fabric. The fabrics can then be used to imagine which city experience they refer to: heavy traffic, the sound of a tram passing by, a metro announcement, wind between tall buildings, loitering, construction, etc.

The ideal outcome would be to show the fabrics in an installation setting, possibly alongside the sounds they come from.

Why make it?

To make a visual output from city noise: looking at noise, mixing two senses, and trying to capture the sonority of being in a busy city and how the machine perceives it through a visual output.

On a more personal level, I want to practise using Arduino and sensors, and connect my interest in garment-making techniques and fabrics with technology.

Workflow

Identifying and choosing city noise. Researching and learning how digital knitting machines work, more specifically the Brother Electroknit KH-940, since it is the one available to me at the fashion station. Based on my findings, programming the sensor to listen and change the colour of the yarn when it hears noise. Testing the results on the machine and adjusting the sensitivity of the sensor. Knitting several different fabrics based on different city noises and observing the differences between them.
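One possible way to adjust the sensitivity of the sensor is to record readings during a quiet moment and put the threshold a few standard deviations above their average. A sketch, assuming the readings are voltages like the ones printed by the Arduino sketch; the function names, the sample values and the factor `k` are hypothetical and would need tuning on site.

```python
import statistics

def calibrate_threshold(quiet_readings, k=3.0):
    """Threshold = mean of the quiet readings + k population standard deviations.

    A larger k makes the detector less sensitive (fewer 'yes' readings).
    """
    mean = statistics.mean(quiet_readings)
    spread = statistics.pstdev(quiet_readings)
    return mean + k * spread

def is_noise(reading, threshold):
    """True when a reading counts as noise."""
    return reading > threshold

# Made-up readings taken while it was quiet
quiet = [2.40, 2.45, 2.50, 2.45, 2.40, 2.50]
threshold = calibrate_threshold(quiet, k=3.0)
print(round(threshold, 3))
print([is_noise(v, threshold) for v in [2.44, 2.90, 2.48]])
```

Recalibrating at each location would let the same code react to what is unusual for that place, rather than to a fixed loudness.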

Timetable

Two, maybe three weeks? I feel like once the code works it should not take long to put together. I don't think it is a very ambitious project.

Rapid prototypes

Connecting the sound sensor (from prototyping class):

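```cpp
#define VCC 5.0     // supply voltage assumed by the conversion
#define GND 0.0
#define ADC 1023.0  // maximum reading of a 10-bit ADC
// Note: pin 34 suggests an ESP32, whose ADC reads 0 to 4095 at roughly 3.3 V.
// If so, VCC and ADC would need to be 3.3 and 4095.0 for correct voltages.

const int sensorPin = 34;  // analog output of the sound sensor
float voltage;

void setup() {
  Serial.begin(115200);  // serial connection to the computer
}

void loop() {
  // Convert the raw ADC reading to a voltage and print it
  voltage = GND + VCC * analogRead(sensorPin) / ADC;
  Serial.println(voltage);
}
```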
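To check what the printed numbers mean, the conversion in `loop()` can be worked through outside the Arduino. A small Python sketch of the same formula; the ESP32 values (0 to 4095, about 3.3 V) are an assumption based on the pin numbers and only apply if the board really is an ESP32.

```python
def adc_to_voltage(raw, vcc=5.0, adc_max=1023.0, gnd=0.0):
    """Same conversion as the sketch: GND + VCC * raw / ADC."""
    return gnd + vcc * raw / adc_max

print(round(adc_to_voltage(512), 3))                              # 10-bit, 5 V
print(round(adc_to_voltage(2048, vcc=3.3, adc_max=4095.0), 3))    # assumed ESP32
```

With these constants the result can never exceed VCC (5.0), which is worth keeping in mind when choosing a threshold for "noise".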

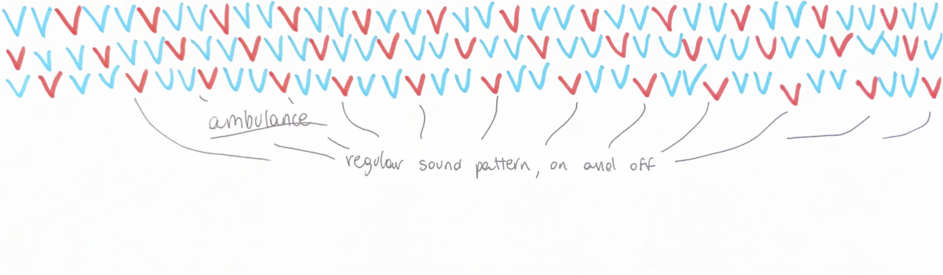

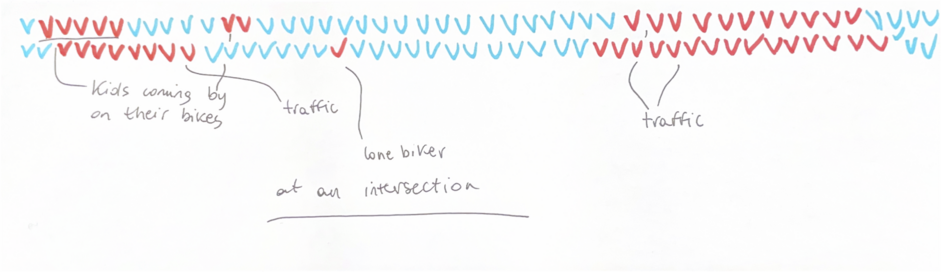

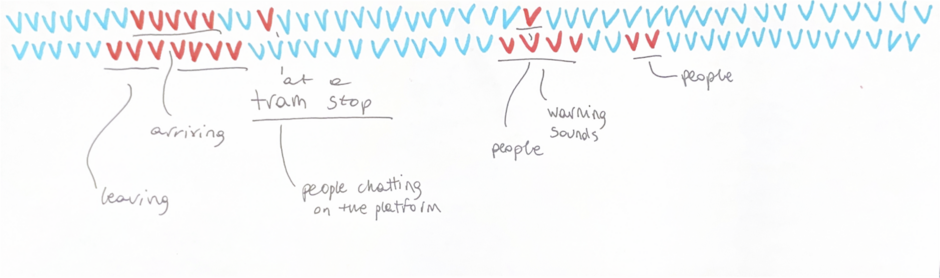

Recordings quickly made on my phone at Blaak:

Sound of an ambulance passing by

Intersection noise

Tram coming, stopping and leaving

A visual (hand-drawn) interpretation:

Previous practice

My practice often references and includes elements from fashion and fabric manufacturing techniques.

Relation to a wider context

Does the machine find the city overwhelming, or does it find it soothing? How can this be interpreted simply by looking at the fabric?

Further prototyping

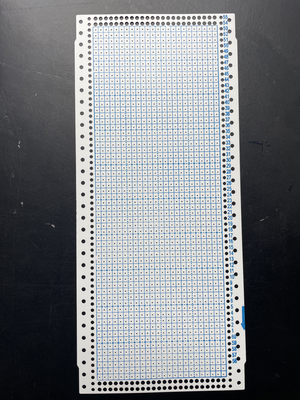

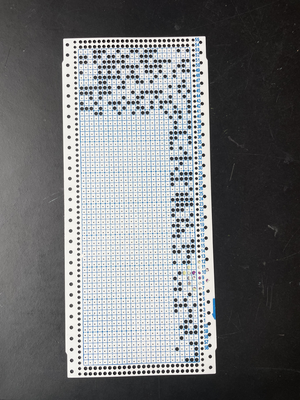

After looking into it a bit more, I found that the 'computer' in the knitting machine is not really a computer, so I started working with the punch card machine instead. I am trying to write a script that, based on the nominal data received from the sensor (yes noise / no noise), tells me which squares the machine will knit in a particular colour.

Trial Python script, not connected to the sensor:

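```python
import matplotlib.pyplot as plt
import numpy as np

# Fake sensor data: a 60 x 24 grid of random 'yes'/'no' readings
rows, cols = 60, 24
data = np.random.choice(['yes', 'no'], size=(rows, cols))

# 'yes' (noise) becomes 1, 'no' (no noise) becomes 0
binary_data = np.where(data == 'yes', 1, 0)

# Show the grid: with the gray colormap, 1 is drawn white and 0 black
plt.figure(figsize=(10, 5))
plt.imshow(binary_data, cmap='gray', interpolation='none')
plt.show()
```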

Python script to transform the inputs received from the sensor into a black and white grid:

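```python
import serial  # pyserial
import matplotlib.pyplot as plt
import numpy as np

# Port name is specific to this computer; change it to match the board
ser = serial.Serial('/dev/cu.usbserial-1130', 115200, timeout=1)

rows, cols = 60, 24  # one reading per square of the punch card

def read_from_arduino():
    """Collect rows * cols 'yes'/'no' readings from the serial port."""
    data = []
    while len(data) < rows * cols:
        # errors='ignore' skips garbled bytes, e.g. right after the port opens
        line = ser.readline().decode('utf-8', errors='ignore').strip()
        if line in ('yes', 'no'):  # skip empty or partial lines
            data.append(line)
            print(f"Received: {line}")
    ser.close()
    return data

data = read_from_arduino()

# Arrange the readings into the grid and convert 'yes' to 1, 'no' to 0
matrix = np.array(data).reshape(rows, cols)
binary_matrix = np.where(matrix == 'yes', 1, 0)

plt.figure(figsize=(10, 5))
plt.imshow(binary_matrix, cmap='gray', interpolation='none')
plt.show()
```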

This is all based on a punch card I got at the fabric station.
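Since the card has to be punched by hand, it might help to print the 0/1 grid (like `binary_matrix` from the script) as text, one card row per line, next to its row number. A sketch, assuming a 1 (a 'yes' reading) marks a square to punch; the function name and the `O`/`.` symbols are hypothetical choices.

```python
import numpy as np

def punch_guide(binary_matrix, hole="O", blank="."):
    """Return the grid as text: one numbered line per card row, `hole` = punch."""
    lines = []
    for row_number, row in enumerate(binary_matrix, start=1):
        cells = "".join(hole if cell else blank for cell in row)
        lines.append(f"{row_number:>3} {cells}")
    return "\n".join(lines)

example = np.array([[1, 0, 0, 1], [0, 1, 1, 0]])
print(punch_guide(example))
```

For the real card each line would be 24 characters wide, matching `cols = 24`.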

This is the Arduino code for the listening sensor:

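```cpp
#define VCC 5.0
#define GND 0.0
#define ADC 1023.0

const int sensorPin = 33;   // analog output of the sound sensor
const int threshold = 160;  // raw reading above which it counts as noise
                            // (may need tuning: print raw values first to pick it)
float voltage;

void setup() {
  Serial.begin(115200);
}

void loop() {
  int raw = analogRead(sensorPin);
  voltage = GND + VCC * raw / ADC;  // kept for reference; at most VCC

  // Compare the raw reading, not the voltage: 'voltage > 160' could never
  // be true, because voltage never exceeds VCC (5.0).
  if (raw > threshold) {
    Serial.println("yes");
  } else {
    Serial.println("no");
  }

  delay(100);  // roughly 10 readings per second
}
```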
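An analog microphone module usually outputs a waveform that swings around a midpoint, so a single sample can land near the middle even when it is loud. A common alternative is to take many samples over a short window and use the difference between the highest and lowest one (peak-to-peak). The logic is sketched here in Python on simulated samples; the window size and threshold are hypothetical values that would need tuning, and on the board this would have to be rewritten in the Arduino sketch.

```python
import math

def peak_to_peak(samples):
    """Loudness estimate: highest sample minus lowest sample in the window."""
    return max(samples) - min(samples)

def classify_windows(samples, window=50, threshold=200):
    """Split raw ADC samples into windows and label each 'yes' or 'no'."""
    labels = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        labels.append("yes" if peak_to_peak(chunk) > threshold else "no")
    return labels

# Simulated raw readings around a midpoint of 512: a quiet window, then a loud one
quiet = [512 + int(5 * math.sin(i)) for i in range(50)]
loud = [512 + int(300 * math.sin(i)) for i in range(50)]
print(classify_windows(quiet + loud))
```

Each window then produces one 'yes'/'no', which fits the one-reading-per-square approach of the Python script.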

I also took a look at OpenSCAD, software for 3D modelling with code, using code from here to model a punch card and its pattern. Still, I think it may be easier to use the Python script directly, because I don't understand the OpenSCAD code that well.
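A possible middle ground is to let Python write the OpenSCAD file, so the modelling code stays simple: a flat card with a cylinder subtracted for every 1 in the grid. A sketch, assuming the grid is a 0/1 matrix like `binary_matrix`; all dimensions (hole pitch, diameter, thickness, margin) are placeholder values, not measurements of a real Brother punch card, and would need checking against the card from the fabric station.

```python
def punch_card_scad(binary_matrix, pitch_x=4.5, pitch_y=5.0,
                    hole_d=3.0, thickness=0.5, margin=5.0):
    """Return OpenSCAD source for a card with a hole for every 1 in the grid.

    All dimensions (mm) are placeholders, not real punch card measurements.
    """
    rows = len(binary_matrix)
    cols = len(binary_matrix[0])
    width = 2 * margin + cols * pitch_x
    height = 2 * margin + rows * pitch_y
    lines = ["difference() {",
             f"  cube([{width}, {height}, {thickness}]);"]
    for r, row in enumerate(binary_matrix):
        for c, cell in enumerate(row):
            if cell:
                # Centre of the square; row 0 is placed at the top of the card
                x = margin + (c + 0.5) * pitch_x
                y = height - margin - (r + 0.5) * pitch_y
                lines.append(f"  translate([{x}, {y}, -1]) "
                             f"cylinder(d={hole_d}, h={thickness + 2}, $fn=24);")
    lines.append("}")
    return "\n".join(lines)

scad = punch_card_scad([[1, 0], [0, 1]])
print(scad)
```

The printed text can be saved as a `.scad` file and opened in OpenSCAD; the cylinders start below the card and end above it so the holes cut all the way through.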

LoRa network

(Abandoned for now. I might come back to it, but right now I am more interested in exploring sound in relation to the city.)

Creating a LoRa network to share non-linear narratives. Imagine having nodes at high points in the city that share narrative information. Each node contains a secret, or a piece of information that is part of a bigger story. I want to make a city-wide treasure hunt, inviting the user to explore the city. A message would only be retrieved when the person is near one of the nodes, hiding information inside an infrastructure that could also be used for other purposes. It could be interesting to implement sensors in this as well.

I am extremely new to this, so honestly, I have no idea if this is even possible.

I want to experiment with this as a different method of storytelling, maybe sharing remarks about places in the city, maybe making up fictional stories about them. Navigation as a method for story listening.
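A hypothetical sketch of how "only retrieved when the person is near a node" might work: LoRa receivers report the signal strength (RSSI, in dBm) of each message they receive, so a receiver could reveal a node's fragment only when that signal is strong enough. The node IDs, placeholder fragments and the -80 dBm cut-off are invented for illustration, and RSSI is only a rough stand-in for distance (buildings and weather change it), so this is a starting point rather than a tested design.

```python
# Hypothetical story fragments, one per node
FRAGMENTS = {
    "node_a": "fragment 1 of the story",
    "node_b": "fragment 2 of the story",
}

RSSI_THRESHOLD_DBM = -80  # hypothetical cut-off; closer to 0 means a stronger signal

def reveal(received, collected):
    """Add the fragment of every node heard strongly enough to `collected`.

    `received` is a list of (node_id, rssi_dbm) pairs, e.g. from a receiver log.
    """
    for node_id, rssi in received:
        if rssi >= RSSI_THRESHOLD_DBM and node_id in FRAGMENTS:
            collected[node_id] = FRAGMENTS[node_id]
    return collected

found = reveal([("node_a", -72), ("node_b", -110)], {})
print(found)  # only node_a is heard strongly enough
```

Keeping `collected` between calls would let a walker gradually assemble the bigger story as they pass different nodes.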

Mapping through sound/silence

To me, sound is a very integral part of what makes a city a city. Notice how loud a common place is. A space or place that is public, communal, shared is so often loud, filled with people talking, announcements, music, sounds.

What is the importance of silence? Is absolute silence something I would like to look for? Would silence make you uncomfortable? What does silence sound like?

I ask myself about the importance of white and background noise. I travel on public transport, record, and try to separate and pay attention to all the overwhelming auditory signals I receive. I hear the movement of the tram as a machine, starting and stopping, or rather being on standby. I hear the announcements made by the mechanical, cold voice, telling passengers where we are and where we are going. I hear the music bleeding from other people's headphones. I hear conversations between colleagues and friends. I hear the sound of checking in and out. I hear the rain pattering. I hear someone zipping up their jacket, getting ready to leave. A sneeze. Sighing. Breathing. Getting up. Walking.
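To compare how loud different moments of a recording are (and to find the quieter stretches), one common measure is the RMS level of short windows, in dB relative to full scale (dBFS, where 0 is the loudest possible). A sketch with numpy, assuming the recording has already been loaded as an array of samples between -1 and 1 (loading a phone recording would need an extra library and is not shown); the one-second window is an arbitrary choice.

```python
import numpy as np

def loudness_dbfs(samples, sample_rate, window_seconds=1.0):
    """Return one RMS level in dBFS per window of the recording."""
    window = int(sample_rate * window_seconds)
    levels = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        rms = np.sqrt(np.mean(chunk ** 2))
        # max() avoids log10(0) for digital silence
        levels.append(float(20 * np.log10(max(rms, 1e-12))))
    return levels

# Simulated 2 s clip at 8 kHz: a quiet second, then a loud second
rate = 8000
t = np.arange(rate) / rate
quiet = 0.01 * np.sin(2 * np.pi * 440 * t)
loud = 0.5 * np.sin(2 * np.pi * 440 * t)
print([round(level, 1) for level in loudness_dbfs(np.concatenate([quiet, loud]), rate)])
```

Windows with a much lower level than their neighbours are candidates for the "silences" in a recording.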

Follow sound and rhythm. List sound and rhythm.

For this special issue, I feel very inspired to work with sound in relation to the city. In prototyping we made a 'sound map' of sorts, recording the sounds of places and trying to guide people in a certain way without using words, focusing on the sounds that make a place recognizable: children playing, a wind chime, the ticking at a traffic light. Would it be possible to create a map relying exclusively on sound?